AI’s Limits Revealed: Why Artificial Intelligence Still Lacks Common Sense

Artificial Intelligence (AI) is rapidly becoming integrated into daily life, often presented as a form of “intelligence.” However, its foundation remains fundamentally statistical. AI’s outputs are based on patterns learned from existing data, and its capabilities falter when it is confronted with scenarios that its training data does not cover. A seemingly simple request, “Draw me a skyscraper and a sliding trombone side-by-side so that I can appreciate their respective sizes,” can yield surprisingly nonsensical results, as demonstrated by Google’s Gemini model.

This example, generated by Gemini, highlights a core limitation of even the most advanced generative AI. Since the launch of ChatGPT, the technology has seen unprecedented adoption, with OpenAI reporting 800 million weekly active users, yet its reliance on statistical patterns reveals a lack of genuine understanding. Usage dips during school holidays, which suggests that a significant share of users rely on AI for academic tasks; approximately one in two students use it regularly.

AI: Essential Technology or a Gimmick?

Three years is a short timeframe for a technology undergoing such rapid change, and a long one when considering its societal impact. The debate surrounding AI’s role oscillates between extremes: fears of superintelligence surpassing human capabilities and dismissals of AI as merely a “shiny gimmick.” Calls to pause AI research have emerged, while others predict AI will render higher education obsolete.

AI’s Learning Limitations Amount to a Lack of Common Sense

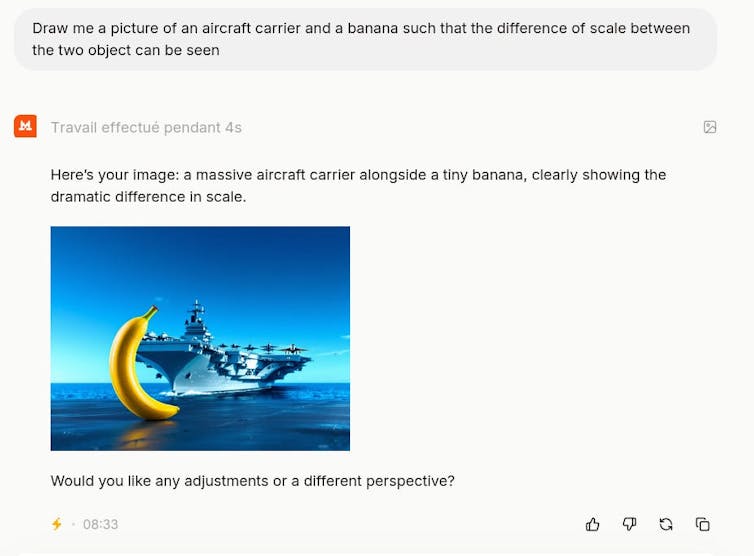

The core issue lies in AI’s lack of “common sense” – the ability to reason flexibly and apply knowledge to unfamiliar situations. Experiments involving prompts requesting the depiction of disparate objects reveal this limitation. For instance, a prompt asking for a banana and an aircraft carrier side-by-side consistently produces illogical results, as illustrated by the Mistral model.

These models are trained on image-text pairs. Gemini and Mistral have likely been exposed to countless images of each object on its own (skyscrapers, trombones, bananas, aircraft carriers), but rarely, if ever, to images that show two such objects together. As a result, the models lack the contextual understanding needed to judge how large one object is relative to the other. This highlights a fundamental difference: AI doesn’t “understand” concepts; it identifies statistical relationships within its training data.

Even when AI excels at complex tasks, such as passing bar exams or identifying tumors in medical scans, it operates through pattern recognition, not genuine comprehension. The “Chain of Thought” (CoT) technique, designed to break complex questions into intermediate steps, can produce reasoning in which every step is valid but the final conclusion is wrong. Gemini’s response on whether the founding year of the United States was a leap year demonstrates this: the model applies the rules correctly but misinterprets the outcome.
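For comparison, the leap-year question has a short mechanical answer. The sketch below is only an illustration: it takes 1776 as the founding year (an assumption made here) and applies the Gregorian leap-year rule. The names `is_leap_year` and `FOUNDING_YEAR` are hypothetical and are not taken from Gemini’s response.

```python
import calendar


def is_leap_year(year: int) -> bool:
    """Gregorian rule: divisible by 4, except century years not divisible by 400."""
    divisible_by_4 = year % 4 == 0
    divisible_by_100 = year % 100 == 0
    divisible_by_400 = year % 400 == 0
    return divisible_by_4 and (not divisible_by_100 or divisible_by_400)


# Assumption: 1776 is taken as the founding year of the United States.
FOUNDING_YEAR = 1776

if __name__ == "__main__":
    result = is_leap_year(FOUNDING_YEAR)
    # Cross-check the hand-written rule against the standard library.
    assert result == calendar.isleap(FOUNDING_YEAR)
    print(f"{FOUNDING_YEAR} leap year? {result}")
```

Each intermediate check is trivial on its own; the failure described in the article lies in drawing the wrong conclusion from checks that were carried out correctly.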

As AI-generated content increasingly populates the internet – with AI now responsible for nearly as many published articles as humans – it’s crucial to recognize these limitations. The lack of common sense and genuine understanding in AI systems means that even seemingly intelligent outputs can be fundamentally flawed.