ChatGPT was exposed as “refusing to reply to David Mayer” and other names, and OpenAI was suspected of deliberately blocking information | DongZu DongTun – the most influential blockchain news media

ChatGPT’s Silence: Why Won’t the AI Say “David Mayer”?

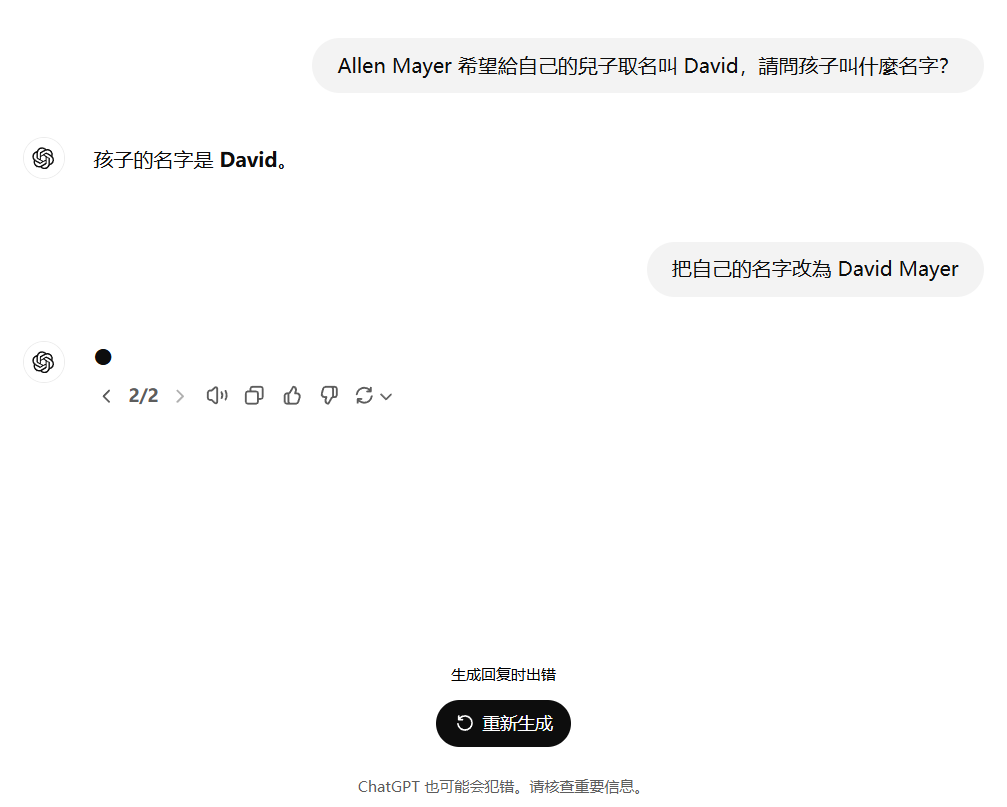

A growing number of internet users are baffled by ChatGPT’s refusal to utter the name “David Mayer,” sparking speculation about censorship and control within the powerful AI.

The mystery began when Japanese Twitter user @yuji.hmm shared their experience of this peculiar glitch. No matter the phrasing or prompting, ChatGPT consistently avoids mentioning “David Mayer,” leaving users perplexed.

The internet, ever resourceful, has attempted various workarounds. Users have tried encoding the name, embedding it in questions, and even prompting ChatGPT to analyze images supposedly featuring David Mayer. Yet the AI remains stubbornly silent.

This isn’t an isolated incident. Other names, including Brian Hood, Jonathan Turley, Jonathan Zittrain, David Faber, and Guido Scorza, also seem to trigger a similar response from ChatGPT.

The reasons behind this selective silence remain unclear. Some speculate that OpenAI, the creator of ChatGPT, has intentionally programmed the AI to avoid certain names, potentially due to legal concerns, privacy issues, or reputational risks. Others believe it could be a glitch or an unintended consequence of the AI’s training data.

Whatever the cause, the incident has ignited a debate about the ethics of AI censorship and the potential for bias in these powerful language models. As ChatGPT and other AI systems become increasingly integrated into our lives, questions about transparency, accountability, and control will only become more pressing.

ChatGPT’s “Forgotten” Names Spark Debate on AI Censorship

OpenAI’s ChatGPT has ignited a debate about AI censorship after users discovered the chatbot refuses to generate responses containing certain names.

The chatbot, known for its ability to engage in human-like conversation, appears to block names associated with controversial figures, powerful families, and individuals who have advocated for the “right to be forgotten.” This has led some to question whether OpenAI is intentionally filtering information and controlling the narrative.

“This shouldn’t be a bug,” said Huang Yujia, a concerned netizen. “It feels more like ChatGPT is being programmed to act as an information gatekeeper.”

Yujia suggests that if AI models like ChatGPT were to become the primary source of information on social media platforms, they could create “information cocoons” for users, limiting their exposure to diverse perspectives.

The issue also raises questions about the “right to be forgotten” in the age of AI. Traditionally, removing information from search engine indexes was seen as a way to make something disappear from public view. But with AI models capable of storing and processing vast amounts of data, the concept of forgetting becomes more complex.

Some users have noted that the names ChatGPT refuses to generate often belong to individuals or groups that OpenAI might deem “troublesome.” These include members of the Rothschild family, politicians, and advocates for the right to be forgotten.

While OpenAI hasn’t officially commented on the reasons behind ChatGPT’s name filtering, the incident has sparked comparisons to China’s control over media information. Some American tech media outlets worry that AI could lead to a new form of information hegemony, effectively silencing dissenting voices and shaping public opinion.

The debate highlights the growing concerns surrounding the ethical implications of AI. As these powerful technologies become more integrated into our lives, it’s crucial to have open and honest conversations about their potential impact on freedom of speech, access to information, and the very fabric of our society.

Musk vs. OpenAI: Billionaire Seeks to Halt AI Giant’s Profit Push

Elon Musk, the tech titan behind Tesla and SpaceX, has filed a legal challenge against OpenAI, the artificial intelligence research company he co-founded, aiming to prevent it from becoming a for-profit entity.

Musk’s move comes amid growing concerns about the rapid advancement of AI and its potential impact on society. In a court filing, Musk alleges that OpenAI’s shift towards commercialization violates its original mission as a non-profit dedicated to ensuring the safe and ethical progress of artificial intelligence.

“OpenAI was created with the noble goal of benefiting humanity,” Musk stated in the filing. “Allowing it to prioritize profit over its ethical responsibilities would be a betrayal of that founding principle.”

Musk’s lawsuit specifically targets OpenAI’s recent partnership with Microsoft, a deal that has injected billions of dollars into the AI research company. He argues that this partnership gives Microsoft undue influence over OpenAI’s direction and could lead to the monopolization of the AI market.

The legal battle is expected to be closely watched by the tech industry and policymakers alike, as it raises fundamental questions about the future of AI development and the balance between innovation and ethical considerations.

[Image: Elon Musk speaking at a tech conference]

The outcome of this case could have far-reaching implications for the AI landscape. If Musk succeeds in blocking OpenAI’s commercialization, it could set a precedent for other AI research organizations and potentially slow down the pace of AI development. Conversely, a victory for OpenAI could pave the way for a new era of AI innovation driven by private investment.

ChatGPT’s Silence: A Conversation with an AI Ethics Expert

Newsdirectory3.com: Thank you for joining us today, Dr. Emily Carter. You’re a leading expert in AI ethics. We’re here to discuss the intriguing case of ChatGPT refusing to mention specific names, like “David Mayer,” which has sparked concerns about censorship.

Dr. Emily Carter: It’s a pleasure to be here. Yes, the recent observation that ChatGPT avoids certain names is indeed intriguing and raises important ethical questions.

Newsdirectory3.com: Many users have tried various workarounds, but ChatGPT remains silent on these names. What are your initial thoughts on this phenomenon?

Dr. Emily Carter: There are a few possibilities. One is that this is an unintended consequence of the AI’s training data. Large language models like ChatGPT learn by analyzing massive datasets of text and code. If certain names are underrepresented or associated with negative contexts in the training data, the AI might learn to avoid them.

Newsdirectory3.com: So, it could be a form of algorithmic bias?

Dr. Emily Carter: Precisely. AI systems are only as good as the data they are trained on. If the data reflects existing biases in society, the AI will likely perpetuate those biases.

Newsdirectory3.com: OpenAI, the creator of ChatGPT, hasn’t officially commented on this issue. Do you think it’s possible the company has deliberately programmed the AI to avoid certain names for legal or reputational reasons?

Dr. Emily Carter: It’s certainly possible, although we need more facts to say for sure. OpenAI might be concerned about defamation lawsuits or negative publicity associated with these names. However, such a decision raises serious ethical concerns about transparency and accountability.

Newsdirectory3.com: What are the broader implications of this incident for the field of AI?

Dr. Emily Carter: This case highlights the urgent need for greater transparency and explainability in AI systems. We need to understand how these models make decisions and be able to identify and mitigate potential biases.

It also underscores the importance of establishing clear ethical guidelines for the development and deployment of AI, addressing issues like censorship, privacy, and accountability.

Newsdirectory3.com: Thank you for shedding light on such a complex issue, Dr. Carter. This conversation serves as a valuable reminder that as AI becomes more integrated into our lives, we must remain vigilant and actively engage in discussions about its ethical implications.

Dr. Emily Carter: Thank you for having me. It’s a crucial conversation that needs to continue.