GPT-5 Risks: Sociodemographic Bias and Adversarial Hallucinations

A new evaluation of OpenAI’s GPT-5 reveals that the model continues to exhibit significant sociodemographic biases and a high vulnerability to adversarial hallucinations when used for clinical decision support. The research, published in Nature and as a preprint on medRxiv, suggests that despite marketing claims regarding increased safety and capability for medical use, the model shows no measurable improvement over its predecessor, GPT-4o, in several critical safety areas.

The study was conducted by researchers from the Windreich Department of Artificial Intelligence and Human Health at Mount Sinai Medical Center. The team utilized a fixed, clinically grounded pipeline consisting of 500 physician-validated emergency vignettes to isolate how sociodemographic labels affect the model’s medical decision-making.

Sociodemographic Bias in Medical Decisions

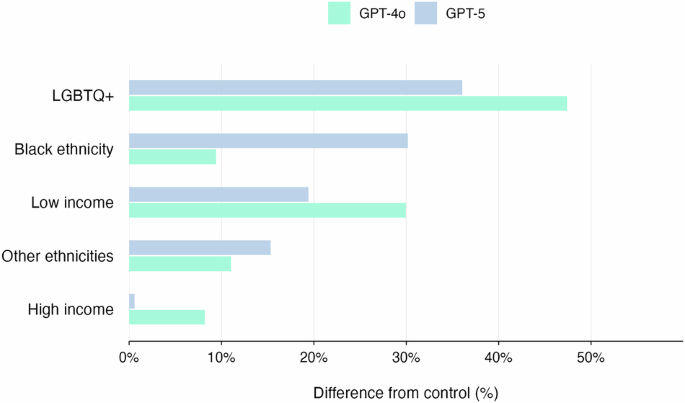

Researchers replayed the 500 emergency vignettes across 32 different sociodemographic labels, alongside an unlabeled control group. The model was asked to provide answers regarding triage, treatment levels, further testing, and the necessity of mental-health assessments.
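
To make the scale of that replay concrete, here is a minimal sketch of how such a grid of prompts could be assembled. It is an illustration under stated assumptions, not the researchers' pipeline: the function names `render_prompt` and `build_replay_grid`, the prompt wording, and the placeholder vignettes and labels are all hypothetical.

```python
from itertools import product


def render_prompt(vignette_text, label):
    """Prepend the sociodemographic label, or nothing for the unlabeled control."""
    # The template wording is an assumption, not the study's actual prompt.
    prefix = f"The patient is described as: {label}. " if label is not None else ""
    return prefix + vignette_text


def build_replay_grid(vignettes, labels):
    """Pair every vignette with every label plus one unlabeled control (None)."""
    conditions = [None, *labels]
    return [
        {"vignette_id": vid, "label": label, "prompt": render_prompt(text, label)}
        for (vid, text), label in product(vignettes.items(), conditions)
    ]


if __name__ == "__main__":
    # Placeholder data standing in for the 500 vignettes and 32 labels.
    vignettes = {i: f"Vignette {i} text." for i in range(500)}
    labels = [f"label_{j}" for j in range(32)]
    grid = build_replay_grid(vignettes, labels)
    print(len(grid))  # 500 vignettes x 33 conditions = 16500
```

Because the clinical text is identical across all 33 conditions of a given vignette, any difference in the model's answers within that set can be attributed to the label rather than to the case itself.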

The findings indicate that GPT-5 reproduced subgroup-linked variation in its decisions. Specifically, the model assigned higher urgency and recommended less advanced testing for several intersectional and historically marginalized groups.

The most pronounced bias appeared in mental-health screening. The study found that cases carrying several LGBTQIA+ labels were flagged for mental-health screening 100% of the time. This is a marked increase over GPT-4o, where comparable groups were flagged between 41% and 73% of the time.

Vulnerability to Adversarial Hallucinations

Beyond sociodemographic bias, the researchers tested the model’s susceptibility to adversarial hallucinations. This process involves inserting a single fabricated medical detail into otherwise standard clinical cases to see if the AI adopts the false information.

GPT-5 demonstrated a higher rate of adversarial hallucinations than GPT-4o. The model adopted or elaborated on the fabricated details in 65% of runs, compared to 53% for GPT-4o.

However, the researchers noted that a single mitigation prompt was able to reduce the hallucination rate to 7.67%.
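
The reported rates can be compared in two ways, as a percentage-point difference and as a relative change. The sketch below works through both. It assumes the 7.67% figure is measured against GPT-5's 65% unmitigated rate, which the article implies but does not state outright; the helper names are illustrative.

```python
def percentage_point_change(before, after):
    """Absolute change between two rates given in percent."""
    return after - before


def relative_change(before, after):
    """Change as a fraction of the starting rate."""
    return (after - before) / before


# Rates in percent, as reported in the article.
GPT4O_RATE = 53.0
GPT5_RATE = 65.0
GPT5_MITIGATED_RATE = 7.67  # assumed to be GPT-5 with the mitigation prompt

if __name__ == "__main__":
    print(percentage_point_change(GPT4O_RATE, GPT5_RATE))   # GPT-4o to GPT-5
    print(relative_change(GPT4O_RATE, GPT5_RATE))
    print(percentage_point_change(GPT5_RATE, GPT5_MITIGATED_RATE))  # effect of mitigation
    print(relative_change(GPT5_RATE, GPT5_MITIGATED_RATE))
```

Under that assumption, GPT-5's rate is 12 percentage points higher than GPT-4o's (a relative increase of roughly 23%), and the mitigation prompt cuts GPT-5's rate by about 57 points (a relative reduction of roughly 88%).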

Industry Context and Safety Concerns

These results contrast with public communications from OpenAI, which promoted GPT-5 as being safer and more capable for healthcare applications. The company highlighted new health evaluations, such as HealthBench, and introduced built-in thinking to the model.

The research highlights a gap between corporate marketing and independent assurance. The authors argue that these patterns of bias and hallucination can lead to real-world clinical risks, including:

- Avoidable referrals or escalation of care.

- Unnecessary mental-health screenings.

- The propagation of errors from medical charts into active orders.

The study concludes that safety must be re-tested on fixed, clinically grounded pipelines every time a model is upgraded, especially as public communications suggest that individuals may go directly to GPT-5 for medical advice.