Hackers vs ICE: Resistance and Digital Defenses

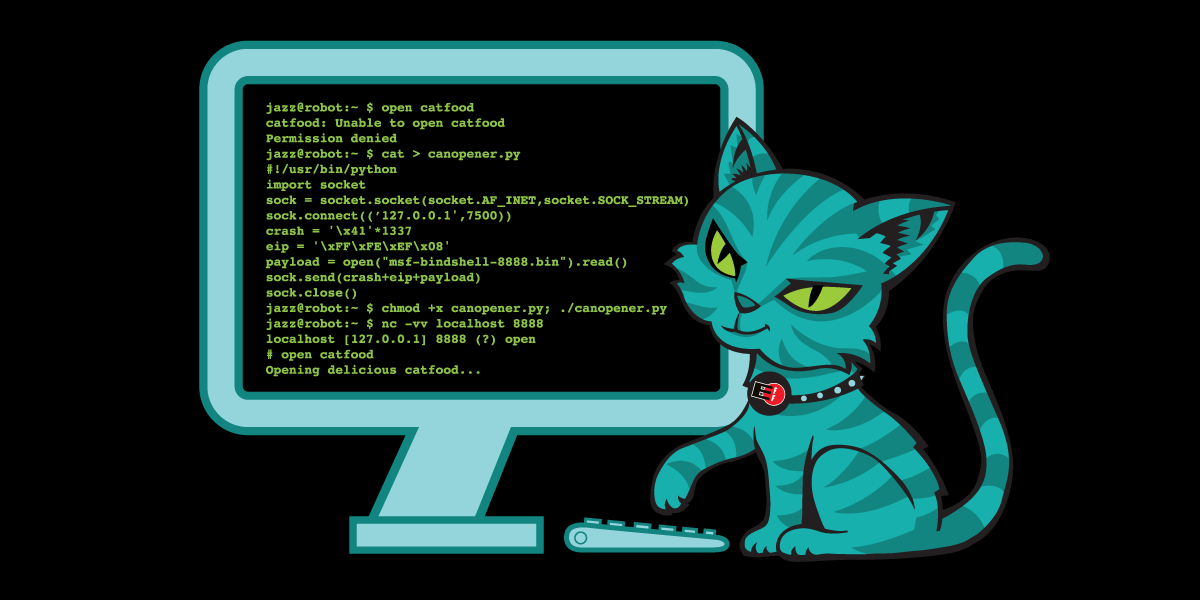

ICE has been invading U.S. cities, targeting, surveilling, harassing, assaulting, detaining, and torturing people who are undocumented immigrants. They have also targeted people with work permits, asylum seekers, permanent residents (people holding “green cards”), naturalized citizens, and even citizens by birth. ICE has spent hundreds of millions of dollars on surveillance technology to spy on anyone, and potentially everyone, in the United States. It can be hard to imagine how to defend oneself against such an overwhelming force. But a few enterprising hackers have started projects to conduct counter-surveillance against ICE and, hopefully, protect their communities through clever use of technology.

Let’s start with Flock, the company behind a number of automated license plate reader (ALPR) and other camera technologies. You might be surprised at how many Flock cameras there are in your community. Many large and small municipalities around the country have signed deals with Flock for license plate readers to track the movement of all cars in their city. Even though these deals are signed by local police departments, oftentimes ICE also gains access.

Because of their ubiquity, people are interested in finding out

The legal landscape surrounding artificial intelligence (AI) projects is complex and rapidly evolving. This report provides an overview of potential legal considerations, but it is not legal advice. You should consult with an attorney to determine the specific risks associated with any AI project.

Understanding the Legal Risks of AI Projects

The legality of using AI projects varies significantly depending on the specific application, the data used, and the jurisdiction. AI projects can raise concerns related to intellectual property, data privacy, liability, and regulatory compliance.

Intellectual Property Considerations

AI systems often rely on large datasets, and the use of copyrighted material in training these systems can lead to legal challenges. The question of whether “fair use” applies to the use of copyrighted data for AI training is a subject of ongoing debate and litigation.

For example, several lawsuits filed in 2023 and 2024 by authors, including Sarah Silverman and Paul Tremblay, against OpenAI and Meta allege copyright infringement related to the use of their works to train large language models (LLMs). Source: The Verge. These cases are still ongoing as of January 9, 2026, and their outcomes will significantly shape the legal landscape for AI development.

Data Privacy and Compliance

AI systems frequently process personal data, triggering obligations under data privacy laws such as the General Data Protection Regulation (GDPR) in the European Union and the California Consumer Privacy Act (CCPA) in the United States. Compliance requires obtaining valid consent, ensuring data security, and providing individuals with rights regarding their data.

The GDPR, effective May 25, 2018, establishes strict rules for processing personal data of individuals within the EU. Source: GDPR Info. Violations can result in substantial fines of up to €20 million or 4% of annual global turnover, whichever is higher. Similarly, the CCPA, enacted in 2018 and amended by the California Privacy Rights Act (CPRA) in 2020, grants California consumers various rights over their personal information. Source: California Office of the Attorney General – CCPA.
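The "whichever is higher" rule for the GDPR's upper fine tier is simple arithmetic, and a short sketch makes the crossover point visible. The function name `gdpr_max_fine_eur` and its parameter are illustrative assumptions, not part of any official tool. The result is only the statutory ceiling described above, not a prediction of what a regulator would actually impose.

```python
def gdpr_max_fine_eur(annual_global_turnover_eur: float) -> float:
    """Return the ceiling of the higher GDPR fine tier, in euros.

    Illustrative helper (hypothetical name): the ceiling is the greater of
    a flat EUR 20 million and 4% of annual global turnover.
    """
    if annual_global_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")

    flat_cap_eur = 20_000_000
    # 4% of turnover, written as *4/100 to keep round inputs exact
    turnover_cap_eur = annual_global_turnover_eur * 4 / 100

    return max(flat_cap_eur, turnover_cap_eur)


if __name__ == "__main__":
    for turnover in (100_000_000, 500_000_000, 1_000_000_000):
        ceiling = gdpr_max_fine_eur(turnover)
        print(f"turnover EUR {turnover:,} -> ceiling EUR {ceiling:,.0f}")
```

Under this rule the flat €20 million figure governs for any organization with turnover below €500 million. Above that, the 4% share becomes the binding ceiling.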

Liability for AI Actions

Determining liability when an AI system causes harm is a complex legal issue. Traditional legal frameworks may not easily apply to situations where an AI system acts autonomously. Questions arise regarding whether the developer, the deployer, or the user of the AI system should be held responsible.

In December 2023, EU lawmakers reached a provisional agreement on the AI Act, a landmark regulation aimed at establishing a legal framework for AI in the EU; the European Parliament formally approved it in March 2024. Source: European Parliament. The AI Act categorizes AI systems based on risk, with high-risk systems subject to stringent requirements and potential liability for damages. The Act entered into force in August 2024, and its obligations apply in stages over the following years.

Regulatory Oversight and Emerging Laws

Governments worldwide are actively developing regulations to address the challenges posed by AI. These regulations cover a wide range of areas, including AI safety, bias, transparency, and accountability.

The U.S. National Institute of Standards and Technology (NIST) released its AI Risk Management Framework (AI RMF) in January 2023, providing guidance for organizations to manage risks associated with AI systems. Source: NIST AI RMF. While not legally binding, the AI RMF is influencing the development of AI regulations and standards. Moreover, several U.S. states are enacting their own AI-specific legislation, creating a patchwork of regulations.

Disclaimer

This information is for