How LLMs Work: A Guide to Building One From Scratch

Educational resources are emerging to demystify the internal mechanics of generative AI, moving developers away from treating Large Language Models (LLMs) as opaque tools. Sebastian Raschka has developed a series of instructional materials, including a book and a comprehensive course, designed to teach developers how to build a GPT-like LLM from the ground up.

The central resource for this approach is the book Build a Large Language Model (From Scratch), published by Manning. The text is designed to take users inside the AI black box to tinker with the internal systems that power generative AI, providing an understanding of how these models function, their inherent limitations, and available customization methods.

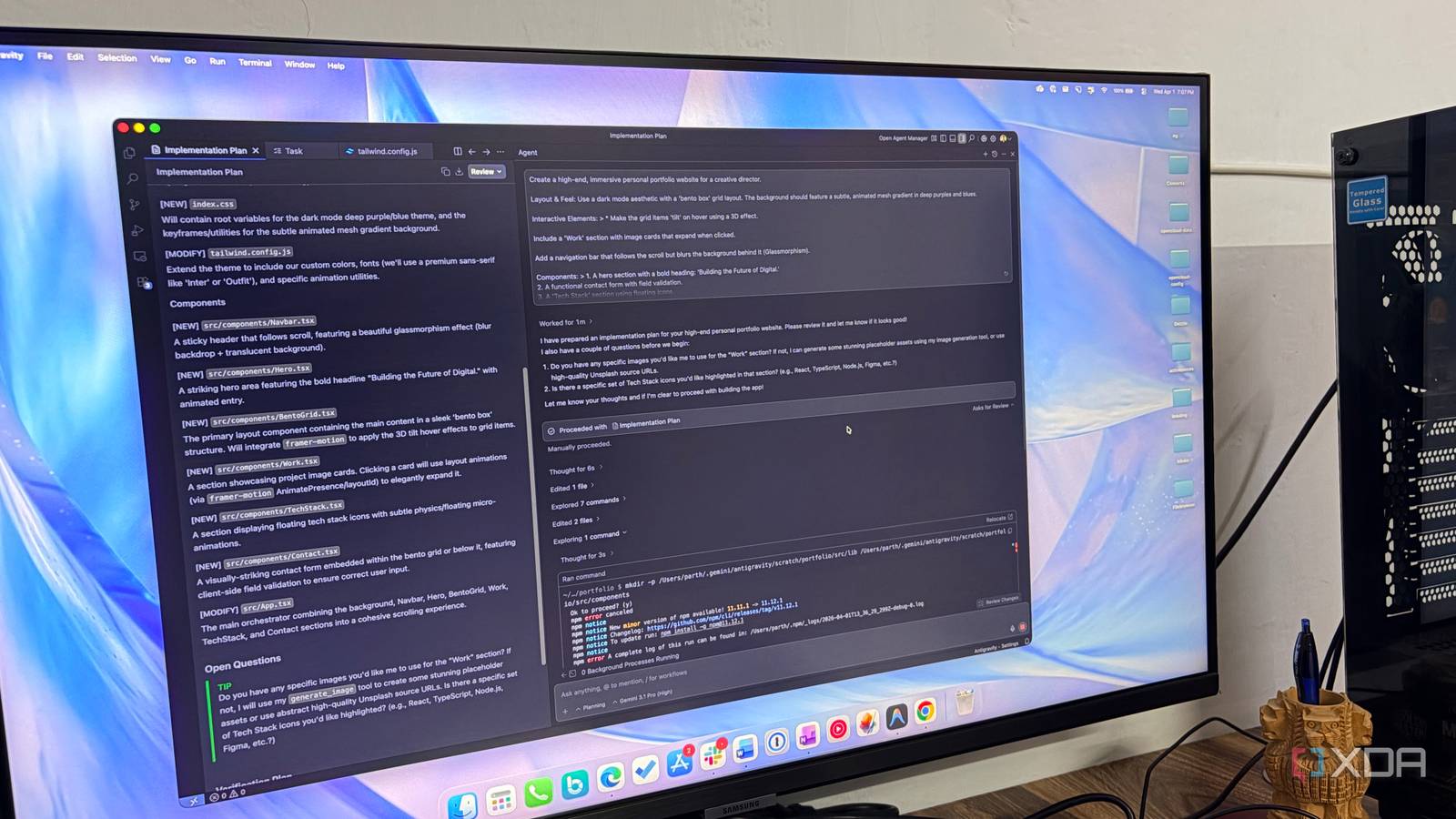

To support the written instruction, an official code repository is available on GitHub under the handle rasbt/LLMs-from-scratch. This repository contains the code required for the development, pretraining, and finetuning of a GPT-like LLM.

Technical Implementation and Learning Path

The methodology described in these resources mirrors the approach used to create large-scale foundational models, such as those behind ChatGPT. However, the focus is on creating small-but-functional models specifically for educational purposes, allowing developers to understand the process without the prohibitive cost and complexity of large-scale industrial models.

The learning path covers several key stages of LLM creation:

- Coding the model architecture from scratch to understand how tokens and text generation operate.

- Pretraining the model so that it learns patterns and grammar.

- Finetuning the model to adapt it to specific tasks.

- Loading weights from larger pretrained models to facilitate further tuning.
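
The first two stages in the list rest on turning text into token IDs and then asking the model to predict each next token. The sketch below illustrates that data preparation step in plain Python. It is not code from the book or its repository: the word-level `tokenize` function, the `build_vocab` helper, and the `context_size` parameter are assumptions made for illustration, and GPT-style models typically use a subword tokenizer rather than this toy scheme.

```python
import re


def tokenize(text):
    """Split text into word and punctuation tokens (a toy scheme, not a subword tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)


def build_vocab(tokens):
    """Assign an integer ID to each unique token, in sorted order."""
    return {tok: idx for idx, tok in enumerate(sorted(set(tokens)))}


def make_training_pairs(token_ids, context_size):
    """Slide a window over the IDs; each target is its input shifted right by one token."""
    pairs = []
    for i in range(len(token_ids) - context_size):
        inputs = token_ids[i : i + context_size]
        targets = token_ids[i + 1 : i + context_size + 1]
        pairs.append((inputs, targets))
    return pairs


text = "the cat sat on the mat."
tokens = tokenize(text)
vocab = build_vocab(tokens)
token_ids = [vocab[tok] for tok in tokens]
pairs = make_training_pairs(token_ids, context_size=4)

for inputs, targets in pairs:
    print(inputs, "->", targets)
```

Each pair shows the same sequence offset by one position, which is the shape of the next-token prediction task used during pretraining: at every position the model is scored on how well it predicts the token that actually follows.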

By building the model step by step, developers can see how text generation functions similarly to the autocomplete features found on mobile devices, but on a much more complex scale.
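
The autocomplete comparison can be made concrete with a deliberately tiny stand-in. The sketch below counts which word follows which in a short corpus and then generates text by repeatedly appending the most frequent follower. It is an assumption-laden illustration, not the book's method: `train_bigram`, `generate`, and the sample corpus are hypothetical, and a GPT-like model replaces the count table with a neural network that scores every token in its vocabulary given the whole preceding context.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token is followed by each other token."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, max_new_tokens):
    """Greedy generation: repeatedly append the most frequent follower of the last token."""
    output = [start]
    for _ in range(max_new_tokens):
        followers = counts.get(output[-1])
        if not followers:
            break  # the last token was never seen with a follower
        next_token = followers.most_common(1)[0][0]
        output.append(next_token)
    return output


# Hypothetical toy corpus; periods are separated by spaces so they become tokens.
corpus = "the cat sat on the mat . the cat sat on the sofa .".split()
model = train_bigram(corpus)
generated = generate(model, "the", max_new_tokens=4)
print(" ".join(generated))
```

The loop structure (look at what has been written so far, pick a next token, append it, repeat) is the part that carries over to larger models; what changes is how the next token is chosen.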

Evolution of Instructional Content

The educational offering has expanded from short-form workshops into a more detailed curriculum. On August 31, 2024, a three-hour coding workshop titled Building LLMs from the Ground Up was shared to introduce the basics of the process.

Following the workshop, a more extensive course was released on May 10, 2025. This course provides approximately 15 hours of content, expanding on the foundational concepts introduced in the earlier workshop. The video components of this course were originally developed as supplementary material for the Manning book but are also available as standalone content.

This shift toward “from scratch” education addresses a growing demand among developers to understand the code and mathematical structures underlying AI, rather than relying solely on high-level APIs or pretrained model interfaces.