A Company’s AI Strategy in the Era of AI Agents

Agentic AI: Transforming Industries and Redefining Business Strategies

The rapid evolution of artificial intelligence is ushering in what many are calling the “era of Agentic AI.” Jensen Huang, CEO of NVIDIA, highlighted this shift at CES 2025, noting that AI is moving beyond simple generation of images, text, and sound to a stage where it can infer, plan, and execute tasks autonomously. This progression extends even further, with some experts already discussing the emergence of “Physical AI.”

AI technology has undergone significant advancements in the last decade. Following its resurgence in 2012 with deep learning, AI evolved into “Perception AI,” capable of recognizing objects. The launch of ChatGPT in late 2022 marked another pivotal moment, paving the way for the current era of Agentic AI.

The Shift from Generative to Agentic AI

Agentic AI distinguishes itself from generative AI through its capacity for autonomous problem-solving. Unlike generative AI, which excels at creating content based on user prompts and vast datasets, Agentic AI can independently plan, execute, and adapt to solve complex problems. This distinction, highlighted in a February 2025 Forbes article, positions Agentic AI as “The Autonomous Problem-Solver,” complementing generative AI’s role as “The Creative Powerhouse.”

The synergy between these AI types offers significant potential across various sectors. In manufacturing, Agentic AI can analyze data to optimize processes and develop execution plans, while generative AI can create reports or visualize simulation results.

Details

- Generative AI: Creates content from learned data according to instructions.

- Agentic AI: Solves complex problems effectively through autonomous planning and execution.

This capability is expanding AI applications in manufacturing, services, logistics, environmental management, and defense. In manufacturing, Agentic AI, integrated with industrial IoT data, can detect anomalies and predict maintenance needs. In the service sector, it enables interactive self-service through knowledge bases, resolving issues like account access and password resets. Logistics benefits from physical agents combining computer vision and robotics to automate goods identification, classification, and packaging. The environmental sector is leveraging AI platforms to predict and manage carbon emissions and promote sustainability.

GPU Utilization in AI Development

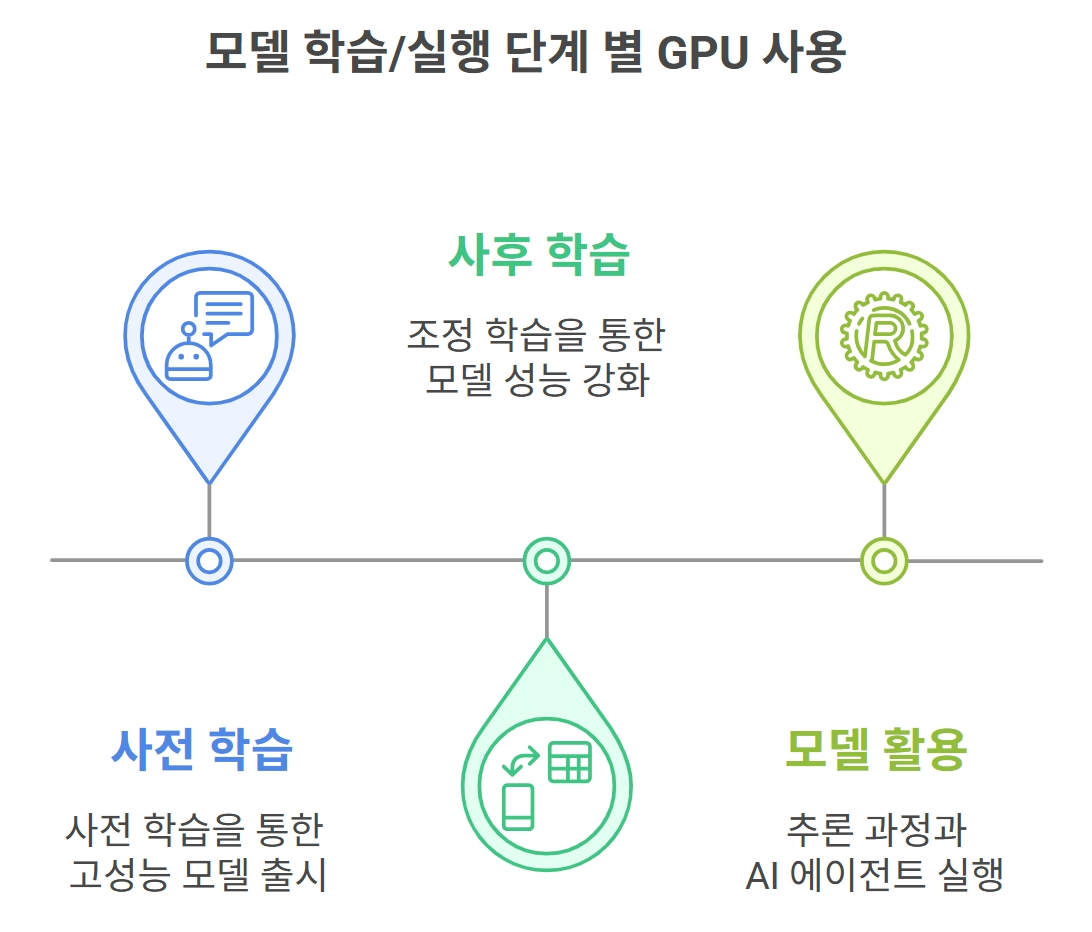

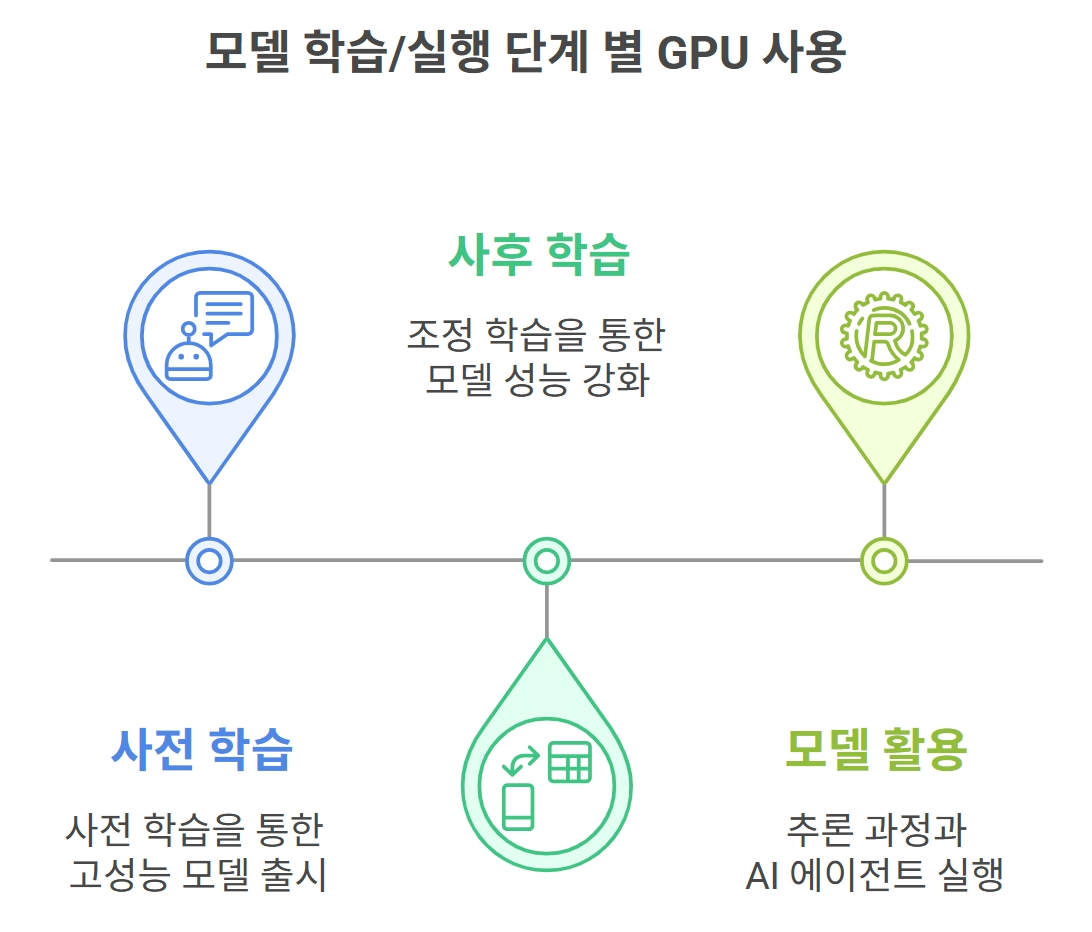

GPUs remain crucial in AI development, with their usage expected to increase. The application of GPUs spans three key stages:

- Pre-Training: Initial model learning using extensive GPU resources and vast datasets.

- Post-Training: Refining model performance through adjustment learning.

- Model Deployment: Inference and AI agent execution.

Details

- Pre-training: Release of high-performance models through pre-training

- Post-training: Strengthening model performance through adjustment learning

- Model use: Inference process and AI agent execution

The initial “Pre-Train” stage involves developing high-performance models using significant GPU resources and extensive knowledge data, exemplified by the release of ChatGPT in 2022. Since early 2024, companies have been enhancing AI model performance through distributed learning, utilizing more data and GPUs.

The second stage, “Post-Train,” focuses on improving performance through “adjustment learning,” enhancing real-world application and reasoning abilities. R1, an AI reasoning model introduced by China’s DeepSeek in 2025, demonstrated excellent performance using relatively few GPU resources and building on open-source models. This has spurred the development of various reasoning models and the proliferation of Agentic AI.

The final stage involves deploying the learned model to solve problems, requiring significant computing power for real-time data processing and decision-making in “Agent Serving/Run.” Therefore, GPU utilization for performance enhancement will continue to expand across all stages.

Shifting Strategies of AI Companies

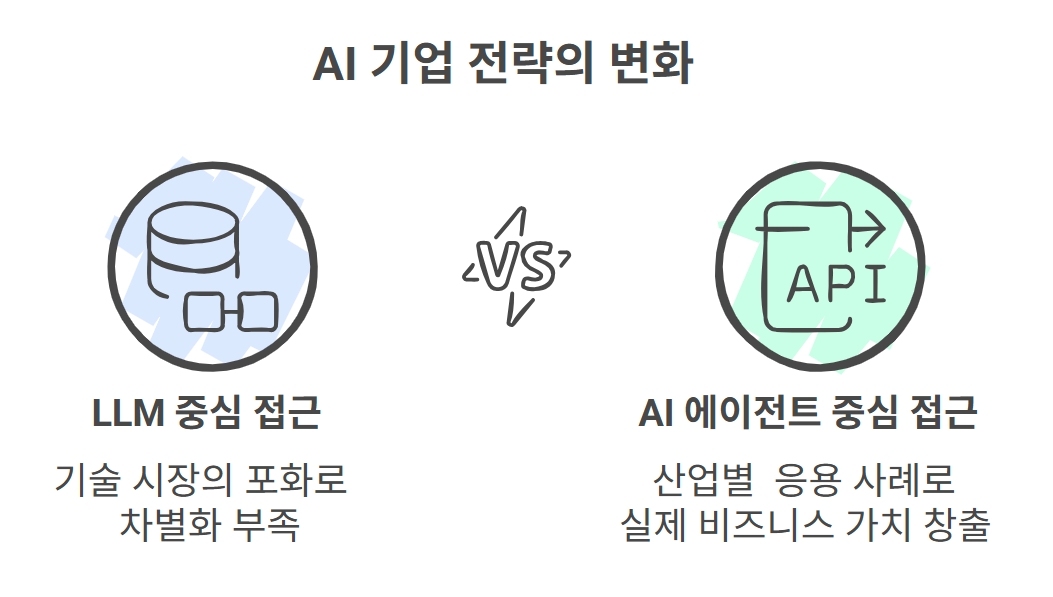

AI companies are increasingly shifting their focus from developing large language models (LLMs) to leveraging existing models for “AI agent” and “application development.” This shift is driven by market saturation and the need for differentiation. Instead of focusing solely on model development, major players like OpenAI, Google, and Anthropic are prioritizing effective problem-solving using existing models. The significant capital invested in AI since 2022 necessitates the creation of real business value through AI agents and industry-specific applications.

Details

- Changes in AI company strategy

- LLM-centric approach (lack of differentiation due to saturation of the technology market) vs. AI agent-oriented approach (actual business value as an industrial application case)

Defining the “Agent” in AI

The definition of “agent” varies across companies. OpenAI describes agents as entities that perform autonomous actions on behalf of users, LLM services with guidelines and tools, or assistants. Microsoft defines them as new AI-based applications with specific expertise, while companies like Anthropic and Salesforce view agents broadly, encompassing tasks from simple repetitive work to complex autonomous operations. This lack of standardization, coupled with the mixing of technical elements and architectural aspects, highlights the need for clear definitions and standards through global standardization efforts, similar to the establishment of the HTTP protocol for web technologies.

Agentic AI: Diverse Applications Emerge

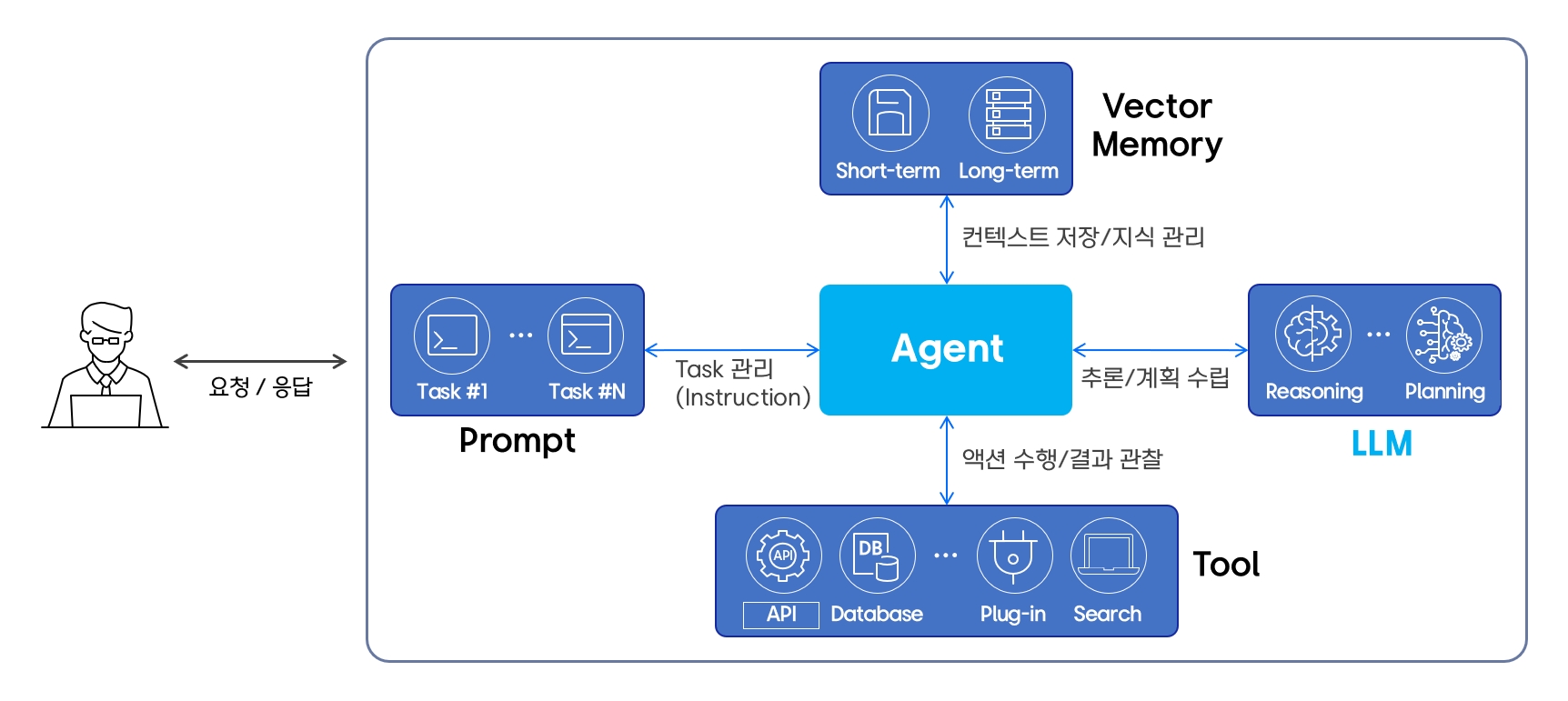

Agentic AI acts as an “agent,” performing specific tasks on behalf of users. An “Agentic System” is an execution environment where agents perceive, infer, act, and analyze results to achieve goals. This system comprises several components: a “Large Language Model (LLM)” for reasoning and planning, “vector memory” for managing short-term and long-term information, and “tools” such as API calls, database searches, and internet searches.

Details

User ← Request/Response → Agent

Agent

- Prompt (Task #1, Task #N) ← Task Management (Instruction) → Agent

- Vector Memory (Short-Term, Long-Term) ← Context Save/Knowledge Management → Agent

- LLM (Reasoning, Planning) ← Inference/Planning → Agent

- Tool (API, Database, Plug-in, Search) ← Action/Observation → Agent
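The components listed above can be read as a loop: the agent asks the model to plan, calls a tool, and stores the observation in memory. The following Python sketch is only an illustration of that loop. `Memory`, `AGENT_TOOLS`, `plan_next_step`, and `run_agent` are hypothetical names, and the planner is a fixed stand-in for what would be an LLM call in a real system.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Memory:
    """Toy stand-in for vector memory: short-term steps plus long-term notes."""
    short_term: List[str] = field(default_factory=list)
    long_term: List[str] = field(default_factory=list)


# Hypothetical tools; a real agent would wrap APIs, databases, or search.
AGENT_TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"search results for '{query}'",
    "database": lambda query: f"rows matching '{query}'",
}


def plan_next_step(task: str, memory: Memory) -> Optional[Tuple[str, str]]:
    """Stand-in for LLM reasoning/planning: return the next (tool, input) or None."""
    plan = [("search", task), ("database", task)]
    done = len(memory.short_term)
    return plan[done] if done < len(plan) else None


def run_agent(task: str, max_steps: int = 5) -> Memory:
    memory = Memory()
    for _ in range(max_steps):
        step = plan_next_step(task, memory)           # Inference/Planning
        if step is None:
            break
        tool_name, tool_input = step
        observation = AGENT_TOOLS[tool_name](tool_input)  # Action/Observation
        memory.short_term.append(f"{tool_name}: {observation}")  # Context save
    memory.long_term.append(f"completed: {task}")     # Knowledge management
    return memory
```

Calling `run_agent("password reset policy")` runs both planned tool calls and records them in short-term memory. The `max_steps` cap is a design choice that keeps a misbehaving planner from looping indefinitely.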

Various Agentic AI applications are under development. OpenAI’s “Deep Research” creates researcher-level reports by investigating and analyzing web searches and papers. This reasoning model achieved 26.6% accuracy on the “Humanity’s Last Exam (HLE)” test. Another example, OpenAI’s “Operator,” performs web-based tasks using a computer, handling online orders or data management through mouse clicks or keyboard typing. However, the performance of these agents is still improving, so they should be used selectively.

“Automatic prediction” agents in risk management and operations analyze abnormal variations, predict outcomes, and adjust autonomously, outperforming existing rule-based or machine learning (ML) systems. These agents are tuned for anomaly detection and time-series analysis, automating various tasks through prompt engineering.

Customer service call center agents recommend answers in real time, summarize consultations, and automatically create FAQs. Running these agents requires converting speech to text (STT), connecting with consultation system guidelines or customer databases, and leveraging LLMs informed by user feedback.

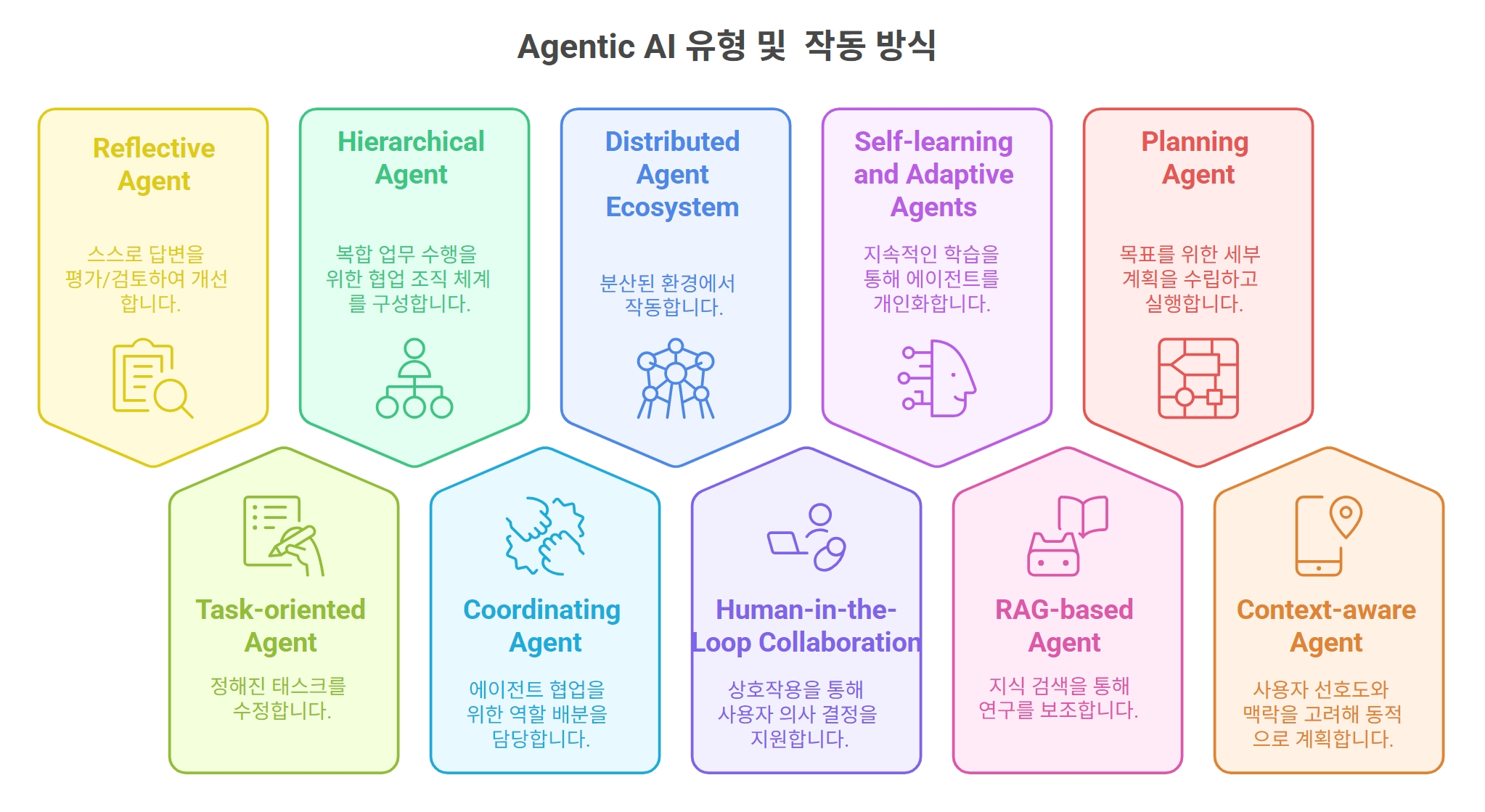

Agentic AI Types and Optimal Choices

Agentic AI can operate independently or in multi-agent systems to handle complex tasks. In multi-agent systems, a leader agent analyzes user goals, divides them into smaller tasks, distributes them to other agents, and collects the results. These systems can connect tasks sequentially or assign them to all agents simultaneously. The key is to select a structure that accurately identifies the problem and solves it effectively.
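The two structures described above, sequential chaining and simultaneous assignment, can be sketched as follows. This is a minimal illustration under stated assumptions: the worker agents in `WORKERS` are hypothetical string-transforming stubs, and `run_sequential` and `run_parallel` are names invented for this example rather than part of any agent framework.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

Worker = Callable[[str], str]

# Hypothetical worker agents; each would normally wrap its own model and tools.
WORKERS: Dict[str, Worker] = {
    "research": lambda text: f"research({text})",
    "draft": lambda text: f"draft({text})",
    "review": lambda text: f"review({text})",
}


def run_sequential(goal: str, order: List[str]) -> str:
    """Chain agents: each one receives the previous agent's output."""
    result = goal
    for name in order:
        result = WORKERS[name](result)
    return result


def run_parallel(goal: str, names: List[str]) -> Dict[str, str]:
    """Fan out: every agent gets the same goal and the leader collects the results."""
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        futures = {name: pool.submit(WORKERS[name], goal) for name in names}
        return {name: future.result() for name, future in futures.items()}
```

A sequential chain suits tasks where each step depends on the previous one, while the fan-out form suits independent subtasks whose results the leader merges afterward.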

Agentic RAG (Retrieval-Augmented Generation) improves on standard RAG by analyzing queries, creating answers, and evaluating their usefulness through a step-by-step process of analysis, review, modification, and supplementation, significantly improving accuracy.
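The retrieve, generate, evaluate, and revise cycle described above can be sketched in a few lines. Everything here is an assumption made for illustration: the corpus in `DOCUMENTS` is invented, word overlap stands in for vector search, and `generate`, `is_useful`, and `rewrite` are trivial placeholders for what would be LLM calls.

```python
from typing import List

# Tiny in-memory corpus standing in for a real document store.
DOCUMENTS = [
    "Password resets are handled on the account settings page.",
    "Account access requires two-factor authentication.",
    "Carbon emission reports are published quarterly.",
]


def retrieve(query: str, k: int = 2) -> List[str]:
    """Rank documents by word overlap with the query (stand-in for vector search)."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(doc.lower().rstrip(".").split())), doc) for doc in DOCUMENTS]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def generate(query: str, context: List[str]) -> str:
    """Stand-in for the LLM call: joins the retrieved context into an answer."""
    return " ".join(context)


def is_useful(query: str, answer: str) -> bool:
    """Stand-in evaluator: accept the answer only if it is non-empty."""
    return bool(answer)


def rewrite(query: str) -> str:
    """Stand-in query revision: lowercase the query and drop punctuation."""
    return "".join(ch for ch in query.lower() if ch.isalnum() or ch.isspace())


def agentic_rag(query: str, max_rounds: int = 2) -> str:
    for _ in range(max_rounds):
        answer = generate(query, retrieve(query))
        if is_useful(query, answer):
            return answer
        query = rewrite(query)  # revise the query and try again
    return "No supported answer found."
```

The point of the loop is that a failed evaluation triggers a revised query instead of returning a weak answer; a query such as `"RESETS?"` finds nothing on the first pass and succeeds after the rewrite step strips the punctuation.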

The Open Worldwide Application Security Project (OWASP) analyzes various Agentic AI structures to address security threats, including “Reflective Agents” that evaluate and review responses, “Task-Oriented Agents” that perform specific tasks, “Hierarchical Agents” with collaborative organization systems, “Distributed Agent Ecosystems” operating in distributed environments, and “Human-in-the-Loop Collaboration” where users participate in decision-making.

Details

- Reflective Agent: Evaluates and reviews its response, then improves it.

- Hierarchical Agent: Sets up a collaborative organization for complex work.

- Distributed Agent Ecosystem: Operates in a distributed environment.

- Self-Learning and Adaptive Agent: Personalizes the agent through continuous learning.

- Planning Agent: Establishes and executes detailed plans for goals.

- Task-Oriented Agent: Performs a fixed task.

- Coordination Agent: Distributes roles for agent collaboration.

- Human-in-the-Loop Collaboration: Supports user decisions through interaction.

- RAG-Based Agent: Assists through knowledge search.

- Context-Aware Agent: Plans dynamically based on user preferences and context.

Developing a Company’s AI Strategy

Given the rapid development and diverse applications of AI, companies need a systematic approach to introduce AI successfully. This requires enterprise-wide AI adoption driven by management commitment, well-maintained data systems, and the integration of complex systems.

AI Agents Demand Strong Data, Security Focus for Business Transformation

As businesses increasingly adopt AI agents to streamline operations, experts emphasize the critical need for robust data management, stringent security protocols, and continuous evaluation to maximize effectiveness and minimize risks.

Optimizing Business Processes with AI: A Multi-Faceted Approach

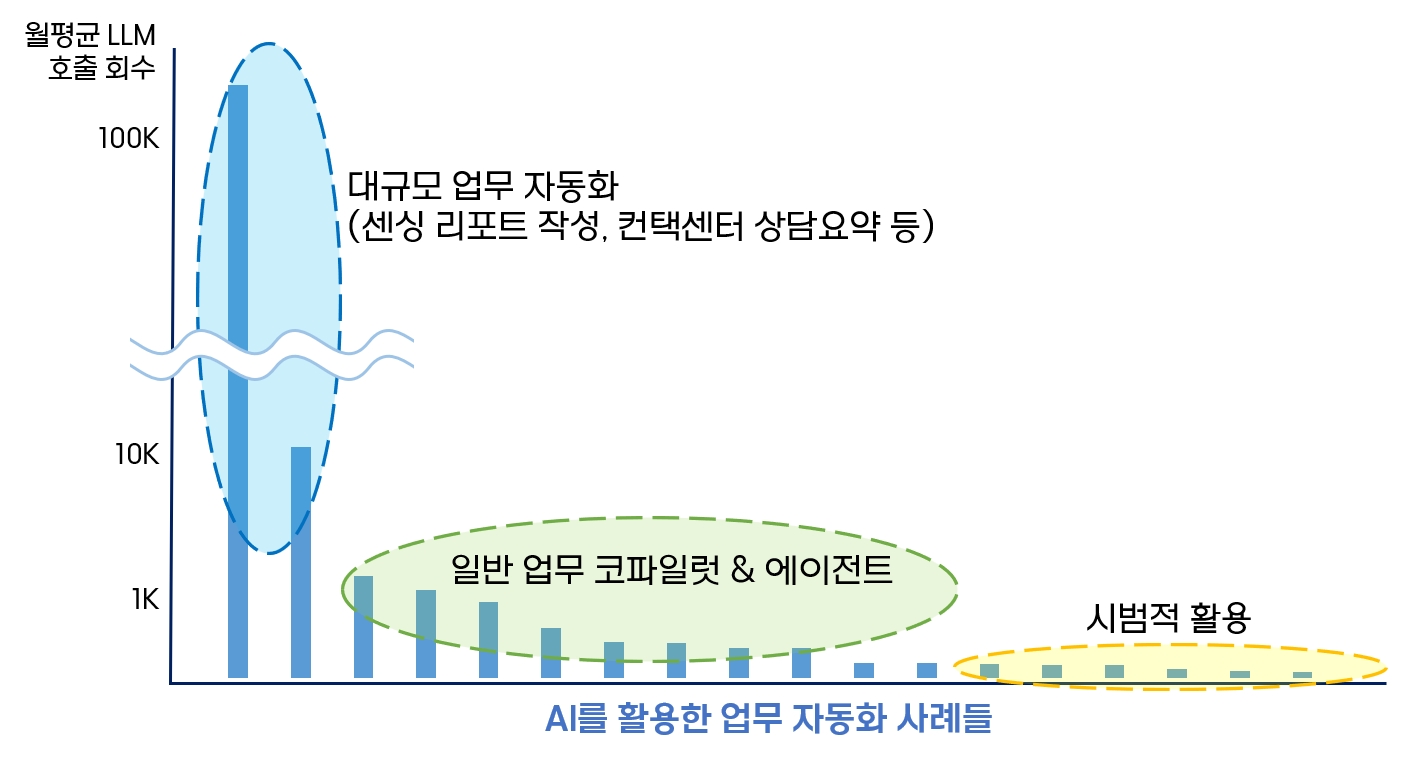

Introducing AI across an organization can yield improvements in various applications, from portal chat services to business solution file management and complex process automation via agent design. The frequency of Large Language Model (LLM) calls and the complexity of tasks vary, requiring a tailored approach based on specific work characteristics.

Tasks involving repetitive actions, such as report generation and contact center consultations, benefit significantly from frequent AI calls. However, successful AI implementation hinges on connecting diverse systems and data sources. Integrating new technologies often necessitates organizing system information, accumulating quality data, and enabling API access to internal systems. This holistic approach facilitates end-to-end business automation and efficiency gains.

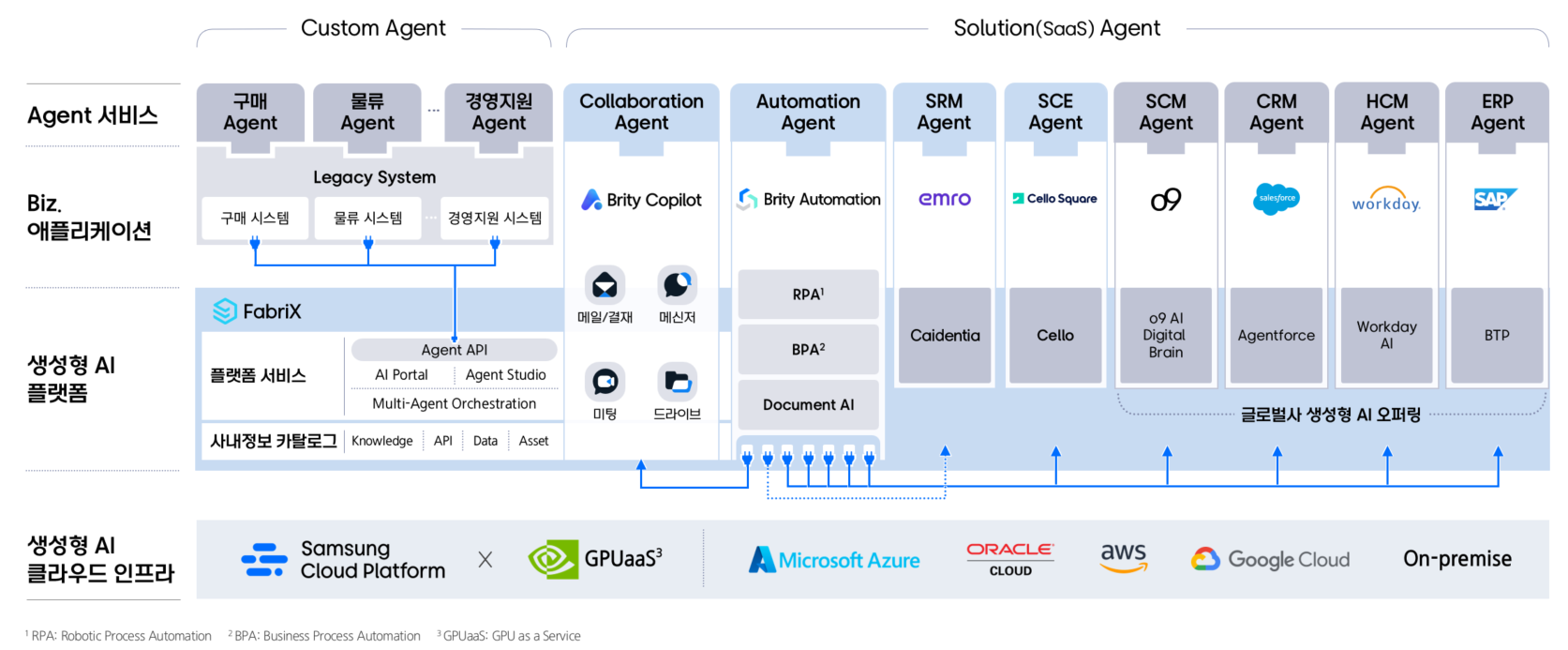

System Connectivity: Expanding AI’s Reach and Impact

A company’s IT environment comprises infrastructure, platforms, applications, and services. Connecting these elements to AI agents can significantly streamline workflows. While solutions like ERP, SCM, and HCM are developing their own agents, effective integration requires data and system-level connections. Creating data catalogs and enabling API linkages are crucial steps, especially in a rapidly evolving technological landscape.

However, improper design during system integration can lead to increased complexity, mirroring the issues of legacy systems. Technical standardization and the creation of adaptable systems are vital for responding effectively to new changes.
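As a rough illustration of how a data catalog with API linkages might look to an agent, the sketch below registers datasets with their owning system and an endpoint, then lets an agent look them up by tag. The class names, dataset names, and endpoint paths are all hypothetical; a production catalog would also carry schemas, owners, and access policies.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    system: str             # owning system, e.g. ERP, SCM, HCM
    endpoint: str           # hypothetical internal API path
    tags: Tuple[str, ...]


class DataCatalog:
    def __init__(self) -> None:
        self._entries: Dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        # One standardized name per dataset keeps integrations from diverging.
        if entry.name in self._entries:
            raise ValueError(f"duplicate dataset: {entry.name}")
        self._entries[entry.name] = entry

    def find_by_tag(self, tag: str) -> List[CatalogEntry]:
        matches = [e for e in self._entries.values() if tag in e.tags]
        return sorted(matches, key=lambda e: e.name)


catalog = DataCatalog()
catalog.register(CatalogEntry("purchase_orders", "ERP", "/api/erp/purchase-orders", ("finance", "supply")))
catalog.register(CatalogEntry("shipments", "SCM", "/api/scm/shipments", ("supply",)))
catalog.register(CatalogEntry("headcount", "HCM", "/api/hcm/headcount", ("people",)))
```

An agent working on a supply question could call `catalog.find_by_tag("supply")` to discover which systems and endpoints to query, rather than having those connections hard-coded into each agent.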

Security Imperatives in the Age of AI Agents

The introduction of AI agents necessitates heightened security awareness. As AI agents autonomously access various systems and data to solve problems, security risks inevitably increase. The Open Worldwide Application Security Project (OWASP) is modeling new security threats that span agents, services, models, databases, and applications.

Protecting individual contact points is insufficient; a comprehensive, multi-directional security system built on threat modeling is essential. Even with existing security and access management systems, organizations must strengthen security measures, including safe memory management, access control, and user inspection. A systematic approach is crucial to address potential vulnerabilities when introducing new AI use cases.
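One way to picture access control for agents is a deny-by-default check in front of every tool call, with each attempt logged for review. The sketch below is a simplified assumption of such a gate: the roles, tool names, and the `call_tool` helper are hypothetical and not taken from OWASP guidance or any specific product.

```python
from typing import Callable, Dict, List, Set

# Hypothetical policy: which tools each agent role may call.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "support_agent": {"knowledge_search"},
    "ops_agent": {"knowledge_search", "restart_service"},
}

# Hypothetical tool implementations.
TOOL_REGISTRY: Dict[str, Callable[[str], str]] = {
    "knowledge_search": lambda arg: f"articles about {arg}",
    "restart_service": lambda arg: f"restarted {arg}",
}

audit_log: List[str] = []


def call_tool(role: str, tool: str, arg: str) -> str:
    """Deny by default and record every attempt for later review."""
    allowed = tool in ALLOWED_TOOLS.get(role, set())
    audit_log.append(f"{role} -> {tool}: {'allowed' if allowed else 'denied'}")
    if not allowed:
        raise PermissionError(f"{role} may not call {tool}")
    return TOOL_REGISTRY[tool](arg)
```

Unknown roles fall through to an empty permission set, so a newly introduced agent can do nothing until it is explicitly granted tools.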

The Evolving Role of Humans in AI-driven Environments

As AI takes on more tasks, the role of humans becomes increasingly significant. Given that AI is not infallible, human oversight is necessary to validate the agent’s autonomous problem-solving and decision-making processes. Studies suggest that knowledge workers’ trust in AI and their self-confidence influence their acceptance of AI-generated results.

Over-reliance on AI without proper inspection can erode professional expertise. Therefore, individuals must focus on critically evaluating AI outputs, which requires enhanced critical thinking skills and domain expertise. Application UI/UX design should encourage critical review rather than uncritical acceptance of AI results.

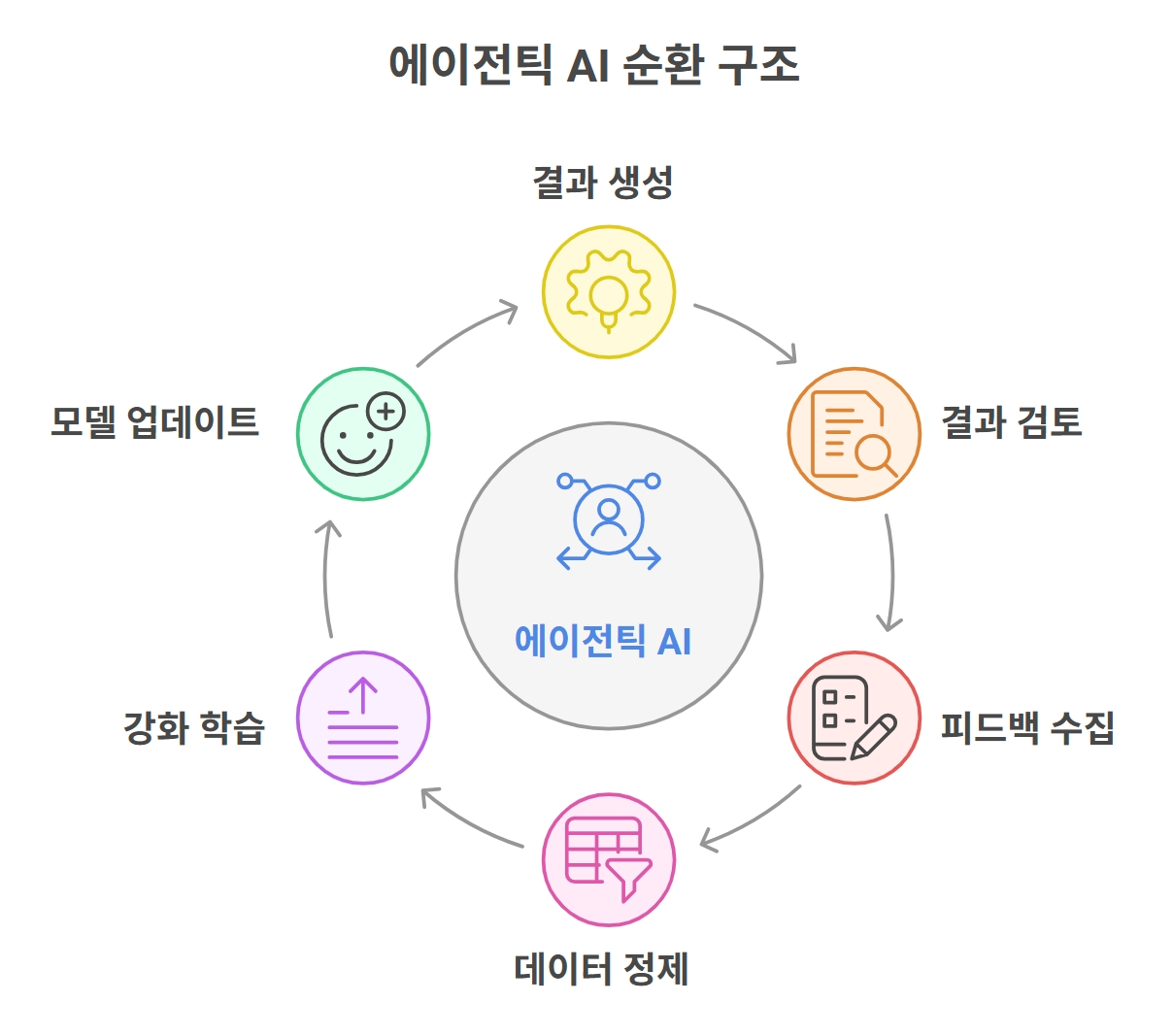

AI agent systems operate cyclically: agents generate results, which are reviewed by human experts. This feedback data is then used to refine the model, creating a virtuous cycle of continuous improvement. Even with initial accuracy rates as low as 60%, consistent data-driven improvements can address the remaining shortcomings. Without this evaluation and refinement process, AI implementation efforts may be wasted.
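The review cycle described above depends on recording each expert judgment in a form that can be measured and reused. The sketch below shows one possible shape for that record, assuming a hypothetical `Review` structure; how the collected corrections are fed back (fine-tuning, prompt updates, or knowledge base edits) is left open.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Review:
    prompt: str
    agent_output: str
    approved: bool
    correction: Optional[str] = None  # expert's fix when the output is rejected


def accuracy(reviews: List[Review]) -> float:
    """Share of agent outputs that human reviewers approved."""
    if not reviews:
        return 0.0
    return sum(r.approved for r in reviews) / len(reviews)


def refinement_examples(reviews: List[Review]) -> List[Tuple[str, str]]:
    """(prompt, corrected answer) pairs to feed back into the next refinement round."""
    return [(r.prompt, r.correction) for r in reviews if not r.approved and r.correction]
```

Tracking `accuracy` per review batch makes the improvement trend visible, and `refinement_examples` turns each rejection into data for the next iteration instead of a one-off fix.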

Data-Driven Innovation in the Agent AI Era

AI technology is in constant flux. Digital transformation has progressed from automating simple tasks via Robotic Process Automation (RPA) to Hyper-Automation and, now, the era of Agent AI. This evolution is driving towards Artificial General Intelligence (AGI) and superintelligence. While predictions vary on when AGI will arrive, the focus should remain on AI’s core purpose: problem-solving.

To leverage AI effectively, companies must define the problems they aim to solve, grounding these definitions and evaluations in data. While technology evolves, data remains a constant asset. Thus, organizations must prioritize establishing robust data systems and standards, with leadership playing a crucial role. Achieving successful innovation in the Agent AI era requires a concerted effort to build data-driven problem definition and evaluation systems.

Agentic AI: Transforming Industries and Redefining Business Strategies

The tech landscape is rapidly evolving, and at the forefront of this change is Agentic AI. At CES 2025, NVIDIA CEO Jensen Huang highlighted the transition from AI that generates images, text, and sound to AI that can infer, plan, and execute tasks independently. This evolution further expands to “Physical AI,” marking a meaningful shift in how we understand and utilize artificial intelligence.

From Generative to Agentic: Understanding the Evolution of AI

Agentic AI represents a leap forward from its generative predecessor. Unlike generative AI, which excels at content creation based on user input, Agentic AI is designed to autonomously solve complex problems. A February 2025 Forbes article encapsulates this difference, positioning Agentic AI as “The Autonomous Problem-Solver,” while recognizing generative AI as “The Creative Powerhouse.”

The integration of these two AI types opens up exciting possibilities across multiple sectors. Manufacturing, for example, can leverage Agentic AI for process optimization and execution planning, while generative AI creates reports or visualizes simulation outcomes.

This capability is driving the expansion of AI applications in diverse fields such as:

- Manufacturing: Agentic AI, integrated with industrial IoT data, can detect anomalies and predict maintenance needs.

- Service sector: Enables interactive self-service through knowledge bases, resolving issues like account access and password resets.

- Logistics: Benefits from physical agents that combine computer vision and robotics to automate goods identification, classification, and packaging.

- Environmental sector: Leverages AI platforms to predict and manage carbon emissions and promote sustainability.

GPU Utilization in AI Development: A Critical Component

GPUs remain crucial for AI development, and their usage is anticipated to increase across three primary stages:

- Pre-Training: The initial stage of model learning that utilizes extensive GPU resources and vast datasets.

- Post-Training: Refining model performance through adjustment learning.

- Model Deployment: The execution of inference and AI agent tasks.

Details

The Pre-Train stage, exemplified by the development of ChatGPT in 2022, involves creating high-performance models using significant GPU resources and extensive datasets. Since early 2024, companies have been enhancing AI model performance through distributed learning, leveraging greater amounts of data and GPUs.

Post-Training focuses on enhancing performance through “adjustment learning,” improving real-world application and reasoning capabilities. R1, an AI reasoning model introduced by China’s DeepSeek in 2025, demonstrated exceptional performance using relatively few GPU resources and building on open-source models. This has spurred the development of various reasoning models and the proliferation of Agentic AI.

The final stage involves deploying the trained model to solve problems, requiring significant computing power for real-time data processing and decision-making in “Agent Serving/Run.” Therefore, GPU utilization for performance enhancement will continue to expand across all stages.

Evolving Strategies in the AI Landscape

The dynamic nature of the AI landscape requires companies to adapt their strategies. The shift toward Agentic AI underscores the need for robust infrastructure, efficient data management, and a security-focused approach to fully leverage the transformative potential of this technology.