Nvidia Rubin: Rack-Scale Encryption Transforms Enterprise AI Security

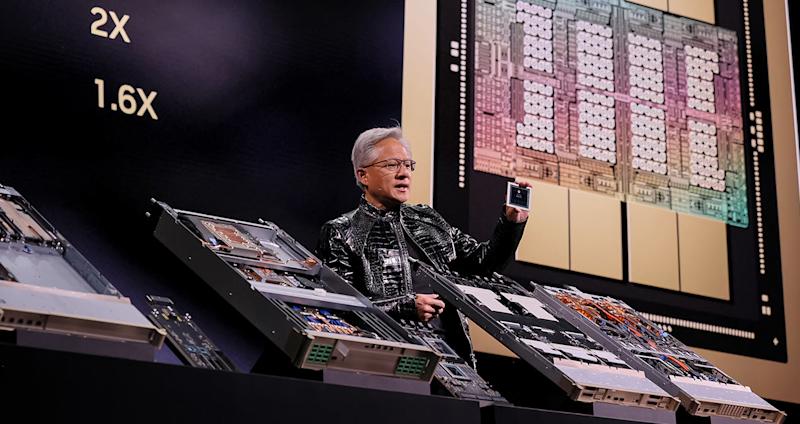

- Nvidia's Vera Rubin NVL72, announced at CES 2026, encrypts every bus across 72 GPUs, 36 CPUs, and the entire NVLink fabric.

- For security leaders, this fundamentally shifts the conversation.

- Epoch AI research shows frontier training costs have grown at 2.4x annually since 2016, which means billion-dollar training runs could be a reality within a few short years.


Nvidia’s Vera Rubin NVL72, announced at CES 2026, encrypts every bus across 72 GPUs, 36 CPUs, and the entire NVLink fabric. It’s the first rack-scale platform to deliver confidential computing across CPU, GPU, and NVLink domains.

For security leaders, this fundamentally shifts the conversation. Rather than attempting to secure complex hybrid cloud configurations through contractual trust with cloud providers, they can verify them cryptographically. That’s a critical distinction when nation-state adversaries have proven they are capable of launching targeted cyberattacks at machine speed.
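To make "verify cryptographically" concrete, here is a minimal, purely illustrative sketch of a challenge-response attestation check. It is not Nvidia's attestation API. The function names (`make_report`, `verify_report`) and the shared `device_key` are hypothetical, and an HMAC stands in for the hardware-rooted asymmetric signature a real confidential-computing platform would use.

```python
import hashlib
import hmac
import secrets


def make_report(device_key: bytes, nonce: bytes, firmware: bytes) -> tuple[bytes, bytes]:
    """Simulated device side: hash ("measure") the firmware, then authenticate
    the measurement together with the verifier's nonce."""
    measurement = hashlib.sha256(firmware).digest()
    tag = hmac.new(device_key, nonce + measurement, hashlib.sha256).digest()
    return measurement, tag


def verify_report(device_key: bytes, nonce: bytes, expected_measurement: bytes,
                  measurement: bytes, tag: bytes) -> bool:
    """Verifier side: accept only if the tag is valid for this nonce AND the
    measurement matches the known-good value."""
    expected_tag = hmac.new(device_key, nonce + measurement, hashlib.sha256).digest()
    tag_ok = hmac.compare_digest(tag, expected_tag)
    measurement_ok = hmac.compare_digest(measurement, expected_measurement)
    return tag_ok and measurement_ok


# Hypothetical values for illustration only.
device_key = secrets.token_bytes(32)       # stand-in for a hardware root of trust
good_firmware = b"trusted-firmware-v1"     # assumed known-good image
golden = hashlib.sha256(good_firmware).digest()

nonce = secrets.token_bytes(16)            # fresh challenge prevents replay
measurement, tag = make_report(device_key, nonce, good_firmware)
print("untampered accepted:", verify_report(device_key, nonce, golden, measurement, tag))

bad_measurement, bad_tag = make_report(device_key, nonce, b"tampered-firmware")
print("tampered accepted:", verify_report(device_key, nonce, golden, bad_measurement, bad_tag))
```

The point of the sketch is the shift in trust model: the verifier relies on a check it can run itself, with a fresh nonce so that an old report cannot be replayed, rather than on a provider's assurance.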

The brutal economics of unprotected AI

Epoch AI research shows frontier training costs have grown at 2.4x annually since 2016, which means billion-dollar training runs could be a reality within a few short years. Yet the infrastructure protecting these investments remains fundamentally insecure in most deployments. Security budgets created to protect frontier models aren’t keeping up with the exceptionally fast pace of model training. The result is that more models are under threat as existing approaches can’t scale and keep up with adversaries’ tradecraft.
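The compounding behind that claim can be sketched in a few lines. The 2.4x annual growth rate is the Epoch AI figure cited above. The starting costs are hypothetical baselines chosen for illustration, not Epoch AI data, and `years_to_reach` is a helper name introduced here.

```python
import math


def years_to_reach(start_cost: float, target_cost: float, annual_growth: float = 2.4) -> float:
    """Years for a cost that multiplies by `annual_growth` each year to grow
    from `start_cost` to `target_cost` (solves start * g**t = target for t)."""
    if start_cost <= 0 or target_cost <= 0:
        raise ValueError("costs must be positive")
    if annual_growth <= 1:
        raise ValueError("annual_growth must be greater than 1")
    return math.log(target_cost / start_cost) / math.log(annual_growth)


# Hypothetical starting points (assumptions, not reported figures).
for start in (50e6, 500e6):
    years = years_to_reach(start, 1e9)
    print(f"${start / 1e6:.0f}M -> $1B at 2.4x/year: about {years:.1f} years")
```

Under these assumptions, a run that costs tens of millions of dollars today crosses the billion-dollar mark in roughly three to four years, which is the sense in which "a few short years" holds.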

IBM’s 2025 Cost of Data Breach Report found that 13% of organizations experienced breaches of AI models or applications. Among those breached, 97% lacked proper AI access controls.

Shadow AI incidents cost $4.63 million on average, or $670,000 more than standard breaches, with one in five breaches now involving unsanctioned tools that disproportionately expose customer PII (65%) and intellectual property (40%).

Think about what this means for organizations spending $50 million or $500 million on a training run. Their model weights sit in multi-tenant environments where cloud providers can inspect the data. Hardware-level encryption that proves the environment hasn’t been tampered with changes that financial equation entirely.

The GTG-1002 wake-up call

In November 2025, Anthropic disclosed something unprecedented: A Chinese state-sponsored group designated GTG-1002 had manipulated Claude Code to conduct what the company described as the first documented case of a large-scale cyberattack executed without significant human intervention.

State-sponsored adversaries turned it into an autonomous intrusion agent that discovered vulnerabilities, crafted exploits, harvested credentials, moved laterally through networks, and categorized stolen data by intelligence value. Human operators stepped in only at critical junctures. According to Anthropic’s analysis, the AI executed around 80 to 90% of all tactical work independently.

The implications extend beyond this single incident. Attack surfaces that once required teams of experienced attackers can now be probed at machine speed by opponents with access to foundation models.

Comparing the performance of Blackwell vs. Rubin

| Specification | Blackwell GB300 NVL72 | Rubin NVL72 |
| --- | --- | --- |
| Inference compute (FP4) | 1.44 exaFLOPS | 3.6 exaFLOPS |

|

NVFP4 per GPU (inference) |

20 PFLOPS |