OCI Services and Open-Source Tools Mapping Guide

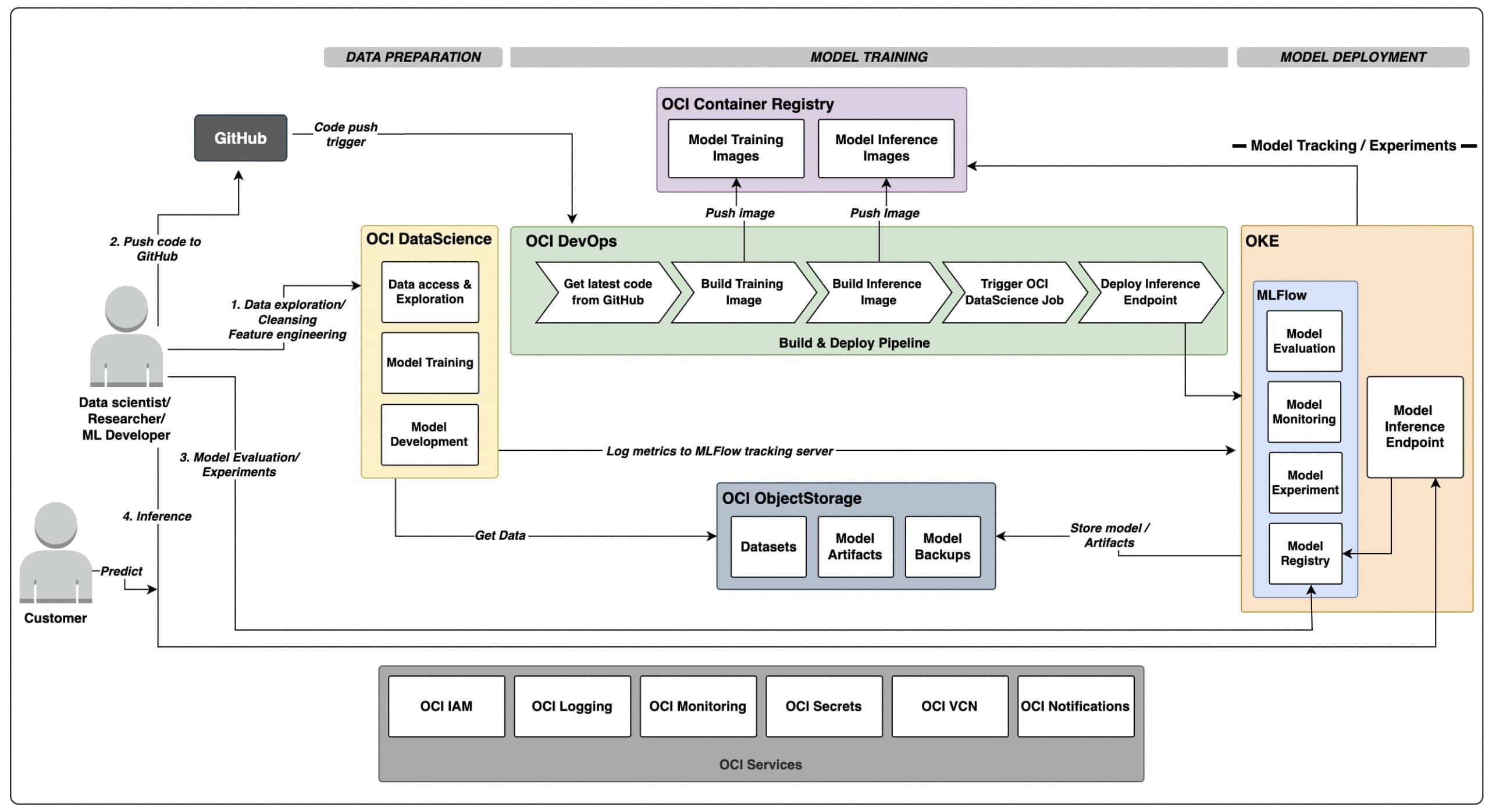

Oracle Cloud Infrastructure (OCI) is advancing its support for machine learning operations (MLOps) through a new guide that details how its services and open-source tools can be used to build, train, and deploy machine learning models with confidence. The resource, published on the Oracle Cloud Infrastructure blog, outlines a practical approach to MLOps that aligns with industry best practices for managing the full lifecycle of machine learning models.

The guide emphasizes the integration of OCI’s native services with widely adopted open-source tools to streamline workflows from data preparation and model training to validation, serving, and monitoring. It positions this combination as a way to reduce complexity and improve reliability in machine learning projects, particularly for teams adopting DevOps principles.

Key components highlighted in the guide include OCI Data Science for collaborative model development, OCI Functions for serverless model serving, and OCI Streaming for real-time data ingestion. These services are paired with open-source frameworks such as MLflow for experiment tracking and model registry, and Kubeflow for orchestrating machine learning pipelines on Kubernetes.
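The pairing described above can be written down as a small lookup table. The following is only an illustrative sketch: the `StageMapping` class, the `MAPPINGS` list, and the `tools_by_kind` helper are hypothetical names introduced here, and the stage labels paraphrase the article rather than quote the guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageMapping:
    """One lifecycle stage and the tool the article associates with it."""
    stage: str
    tool: str
    kind: str  # "oci" for a native service, "open-source" otherwise


# Hypothetical table built from the services and frameworks named in the article.
MAPPINGS = [
    StageMapping("real-time data ingestion", "OCI Streaming", "oci"),
    StageMapping("collaborative model development", "OCI Data Science", "oci"),
    StageMapping("experiment tracking and model registry", "MLflow", "open-source"),
    StageMapping("pipeline orchestration on Kubernetes", "Kubeflow", "open-source"),
    StageMapping("serverless model serving", "OCI Functions", "oci"),
]


def tools_by_kind(mappings):
    """Group tool names by whether they are OCI services or open-source tools."""
    grouped = {}
    for m in mappings:
        grouped.setdefault(m.kind, []).append(m.tool)
    return grouped


if __name__ == "__main__":
    for m in MAPPINGS:
        print(f"{m.stage:40s} -> {m.tool} ({m.kind})")
```

Keeping the mapping as data rather than prose makes it easy to check that every stage a team cares about has an owner on one side or the other.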

The approach supports automation and repeatability, enabling teams to maintain consistency across development, testing, and production environments. By leveraging infrastructure-as-code and continuous integration/continuous deployment (CI/CD) practices, the guide suggests that organizations can accelerate model delivery while maintaining governance and security standards.
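One way to picture the repeatability described here is a promotion gate that a CI/CD job could run before moving a model from one environment to the next. This is a minimal sketch under stated assumptions, not something taken from the guide: the environment names follow the article, while `next_environment`, `may_promote`, the metric names, and the "higher is better" convention are all hypothetical.

```python
# Environments in promotion order, as named in the article.
ENVIRONMENTS = ("development", "testing", "production")


def next_environment(current):
    """Return the environment that follows `current`, or None at the end."""
    idx = ENVIRONMENTS.index(current)
    if idx == len(ENVIRONMENTS) - 1:
        return None
    return ENVIRONMENTS[idx + 1]


def may_promote(candidate_metrics, baseline_metrics, min_gain=0.0):
    """Allow promotion only if the candidate matches or beats every baseline metric.

    Assumption: every metric is "higher is better" (e.g. accuracy, recall).
    A metric missing from the candidate blocks promotion.
    """
    for name, baseline in baseline_metrics.items():
        if name not in candidate_metrics:
            return False
        if candidate_metrics[name] < baseline + min_gain:
            return False
    return True


if __name__ == "__main__":
    baseline = {"accuracy": 0.90, "recall": 0.80}
    candidate = {"accuracy": 0.91, "recall": 0.80}
    if may_promote(candidate, baseline):
        print("promote to", next_environment("testing"))
```

Because the gate is a pure function of recorded metrics, the same check gives the same answer in every environment, which is the consistency the paragraph is describing.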

OCI also supports the OCI Service Operator for Kubernetes (OSOK), which lets Kubernetes users create and manage OCI resources directly through the Kubernetes API. Teams that already work through Kubernetes can therefore provision OCI resources without switching to separate command-line interfaces or proprietary developer tools.
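As a rough illustration of managing an OCI resource through the Kubernetes API, the sketch below builds a custom resource manifest for an OCI stream and prints it as JSON, a format the Kubernetes API accepts alongside YAML. The `apiVersion`, `kind`, and `spec` field names are assumptions based on OSOK's published Stream sample and should be checked against the CRDs installed in a given cluster; `stream_manifest`, the resource name, and the compartment OCID are placeholders invented for this example.

```python
import json


def stream_manifest(name, compartment_ocid, partitions=1, retention_hours=24):
    """Build a Kubernetes custom resource describing an OCI stream.

    Assumption: field names mirror OSOK's Stream sample; verify them against
    the installed CRD before relying on this shape.
    """
    return {
        "apiVersion": "oci.oracle.com/v1beta1",
        "kind": "Stream",
        "metadata": {"name": name},
        "spec": {
            "compartmentId": compartment_ocid,
            "name": name,
            "partitions": partitions,
            "retentionInHours": retention_hours,
        },
    }


if __name__ == "__main__":
    # Placeholder OCID for illustration only; substitute a real compartment OCID.
    manifest = stream_manifest("inference-events", "ocid1.compartment.oc1..example")
    print(json.dumps(manifest, indent=2))
```

The resulting document can be submitted with whatever Kubernetes client a team already uses, after which the operator is responsible for reconciling the stream in OCI.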

Security and monitoring are also addressed in the guide, with recommendations to use OCI Identity and Access Management (IAM) for secure access control and OCI Monitoring and Logging services to track model performance and system health. These capabilities help ensure that deployed models operate reliably and comply with organizational policies.
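Access control in OCI IAM is expressed as policy statements of the form `Allow group <group> to <verb> <resource-type> in compartment <compartment>`. The helper below is a hedged sketch that assembles such statements for the services mentioned in this article. The group name `ml-engineers` and compartment name `mlops` are hypothetical, `policy_statement` is an illustrative helper rather than part of any OCI SDK, and the resource-type names should be confirmed against the OCI policy reference for each service.

```python
# The four permission verbs OCI IAM policies use, from least to most access.
VERBS = ("inspect", "read", "use", "manage")


def policy_statement(group, verb, resource_type, compartment):
    """Format one OCI IAM policy statement as a string."""
    if verb not in VERBS:
        raise ValueError(f"unknown verb {verb!r}; expected one of {VERBS}")
    return f"Allow group {group} to {verb} {resource_type} in compartment {compartment}"


if __name__ == "__main__":
    # Hypothetical group and compartment; resource types are assumptions to verify.
    for verb, resource_type in [
        ("manage", "data-science-family"),
        ("use", "functions-family"),
        ("use", "stream-family"),
    ]:
        print(policy_statement("ml-engineers", verb, resource_type, "mlops"))
```

Generating statements from a short list keeps the granted verbs visible in one place, which makes it easier to review whether a group has more access than its role needs.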

The guide reflects a broader trend in cloud computing where providers are offering integrated toolchains that combine proprietary services with open-source technologies to meet the evolving needs of data science and engineering teams. By focusing on practical implementation rather than theoretical concepts, Oracle aims to provide a clear path for enterprises looking to scale their machine learning initiatives.

As machine learning becomes central to business operations across industries, resources like this guide help organizations work through the technical and operational challenges of deploying models at scale. The emphasis on verified, reproducible workflows underscores that machine learning systems are critical infrastructure and need the same rigor as traditional software systems.