Qwen2.5 Dethrones Meta: The Unstoppable Rise of the World’s Top Open-Source Large Model

Alibaba Cloud Unveils Qwen2.5, Billed as the World’s Strongest Open-Source Model

At the Yunqi (Apsara) Conference on September 19, 2024, Alibaba Cloud CTO Zhou Jingren released Qwen2.5, the new generation of Tongyi Qianwen open-source models. According to Alibaba Cloud, the flagship Qwen2.5-72B outperforms the much larger Llama 3.1 405B, putting the series back at the top of the open-source field in performance rather than in parameter count.

The full Qwen2.5 series covers large language models, multimodal models, mathematical models, and code models in multiple sizes. Each size comes in a base version, an instruction-tuned version, and quantized versions. More than 100 models are available in total, which Alibaba Cloud describes as a new industry record.

Improved Performance and Capabilities

All Qwen2.5 models are pre-trained on 18 trillion tokens of data. Compared with Qwen2, overall performance improves by more than 18%, with broader knowledge and stronger programming and mathematical capabilities. The Qwen2.5-72B model scores 86.8 on the MMLU-redux benchmark (general knowledge), 88.2 on the MBPP benchmark (coding ability), and 83.1 on the MATH benchmark (mathematical ability).

Qwen2.5 supports context lengths of up to 128K tokens and can generate up to 8K tokens of output. The model has strong multilingual capabilities and supports more than 29 languages, including Chinese, English, French, Spanish, Russian, Japanese, Vietnamese, and Arabic. It also responds reliably to a wide variety of system prompts, which makes it well suited to tasks such as role-playing and chatbots.
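For readers who want to see how these two limits interact in practice, here is a minimal sketch of a token-budget check. It rests on assumptions not stated in the article: that "K" means 1,024 tokens, and that the prompt and the generated output share the same context window. The names `CONTEXT_WINDOW`, `MAX_GENERATION`, and `generation_budget` are hypothetical and not part of any Qwen API.

```python
# Assumed limits, derived from the "128K" and "8K" figures quoted above.
# Reading "K" as 1,024 tokens is an assumption, not something the article states.
CONTEXT_WINDOW = 128 * 1024   # total tokens the model can attend to
MAX_GENERATION = 8 * 1024     # maximum tokens generated in one response


def generation_budget(prompt_tokens: int, requested_new_tokens: int) -> int:
    """Return how many new tokens can actually be requested for a prompt.

    Assumes the prompt and the generated output share one context window.
    """
    if prompt_tokens < 0 or requested_new_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if prompt_tokens >= CONTEXT_WINDOW:
        raise ValueError("prompt does not fit in the context window")
    remaining = CONTEXT_WINDOW - prompt_tokens
    # The request is capped by both the generation limit and the space left.
    return min(requested_new_tokens, MAX_GENERATION, remaining)
```

Under these assumptions, a short prompt is capped by the 8K generation limit, while a prompt that nearly fills the window is capped by whatever space is left.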

Language Models and Specialized Models

For language models, Qwen2.5 has been open-sourced in seven sizes: 0.5B, 1.5B, 3B, 7B, 14B, 32B, and 72B. Alibaba Cloud reports that each achieves the best results among models of comparable parameter count. The range of sizes is designed to meet the differing needs of downstream scenarios.
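To give a rough sense of why the range of sizes matters for deployment, the sketch below lists the base and instruction-tuned model names for the seven sizes and estimates the memory needed just to hold the weights. The 2-bytes-per-parameter figure assumes 16-bit weights, and the estimate ignores activations, KV cache, and quantization, so it should be read as back-of-envelope arithmetic only. The helper names are hypothetical; the name pattern follows the "Qwen2.5-72B" and "Qwen2.5-72B-Instruct" names used in this article.

```python
# The seven sizes listed above, in billions of parameters.
SIZES = ["0.5B", "1.5B", "3B", "7B", "14B", "32B", "72B"]


def model_names(prefix: str = "Qwen2.5") -> list[str]:
    """Return base and instruction-tuned names for every size."""
    names = []
    for size in SIZES:
        names.append(f"{prefix}-{size}")            # base version
        names.append(f"{prefix}-{size}-Instruct")   # instruction-tuned version
    return names


def weight_memory_gb(size: str, bytes_per_param: int = 2) -> float:
    """Estimate weight-only memory in GB (10^9 bytes).

    Assumes 16-bit weights by default; this is an illustration,
    not a measured requirement.
    """
    billions = float(size.rstrip("B"))
    # billions of parameters * bytes per parameter = billions of bytes = GB
    return billions * bytes_per_param


if __name__ == "__main__":
    for size in SIZES:
        print(f"Qwen2.5-{size}: about {weight_memory_gb(size):g} GB of weights")
```

On this estimate the smallest model needs about 1 GB for its weights and the flagship about 144 GB, which is the practical reason for offering sizes that span edge devices to multi-GPU servers.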

The 72B model is the flagship of the Qwen2.5 series. Its instruction-tuned version, Qwen2.5-72B-Instruct, has performed well in widely used evaluations such as MMLU-redux, MATH, MBPP, LiveCodeBench, Arena-Hard, AlignBench, MT-Bench, and MultiPL-E.

Among the specialized models, Qwen2.5-Coder for programming and Qwen2.5-Math for mathematics have made substantial progress over their predecessors. Qwen2.5-Coder was trained on up to 5.5T tokens of programming-related data. Its 1.5B and 7B versions were open-sourced on the day of the announcement, and a 32B version is to be open-sourced later.

Multimodal Models

The much-anticipated vision-language model Qwen2-VL-72B has been officially open-sourced. Qwen2-VL can recognize images of different resolutions and aspect ratios, understand videos longer than 20 minutes, and act as a visual agent that autonomously operates mobile phones and robots.

In the latest vision-model results published on the LMSYS Chatbot Arena Leaderboard, Qwen2-VL-72B was the highest-scoring open-source model in the world.

Ecosystem and Downloads

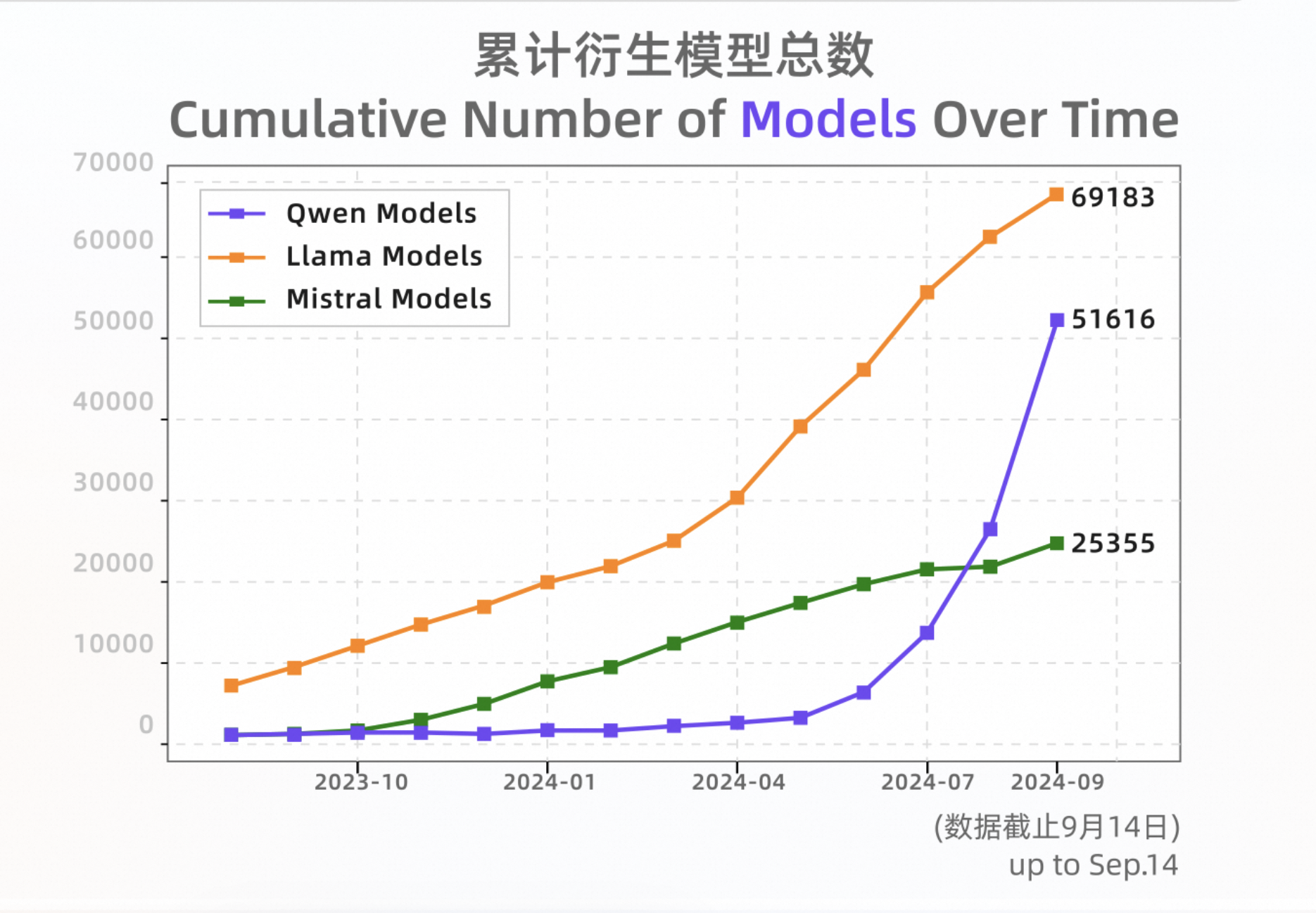

Since its first open-source release in August 2023, Tongyi has become the preferred choice for developers, especially Chinese developers, in the global open-source large-model field. In terms of performance, Tongyi’s large models have gradually surpassed Llama, the strongest open-source model from the United States, and have repeatedly topped Hugging Face’s global large-model leaderboard.

As of mid-September 2024, Tongyi Qianwen open-source models had been downloaded more than 40 million times, and more than 50,000 derivative models had been built on the Qwen series, making it a world-class model family second only to Llama.