Revolutionizing Video Understanding: MMBench Unveils Groundbreaking Benchmark for Medium and Long-Form Videos

Baijiao

2024-10-30 16:56:16 Source: Qubits

From Zhejiang University in conjunction with Shanghai Artificial Intelligence Laboratory, Shanghai Jiao Tong University and the Chinese University of Hong Kong

The GPT-4o launch event in May set off a craze for video understanding, and open-source leader Qwen2 has pushed just as hard on video, posting strong results across a range of video evaluation benchmarks.

However, most of the current evaluation benchmarks still have the following shortcomings:

- Focus on short videos: the video length or the number of shots is insufficient, making it difficult to examine a model’s long-range temporal understanding;

- Evaluation is limited to relatively simple tasks: most benchmarks do not cover finer-grained capabilities;

- High scores can still be achieved on existing benchmarks from a single frame, indicating that the questions depend only weakly on the temporal sequence of frames;

- Open-ended questions are still scored with the older GPT-3.5: its scores deviate substantially from human preference, are inaccurate, and tend to overestimate model performance.

Is there a benchmark that addresses these problems more effectively?

MMBench-Video, accepted at NeurIPS D&B 2024 and proposed by Zhejiang University, Shanghai Artificial Intelligence Laboratory, Shanghai Jiao Tong University and the Chinese University of Hong Kong, provides a comprehensive open-ended video understanding benchmark. The team has also built an open-source leaderboard of video understanding ability for current mainstream MLLMs.

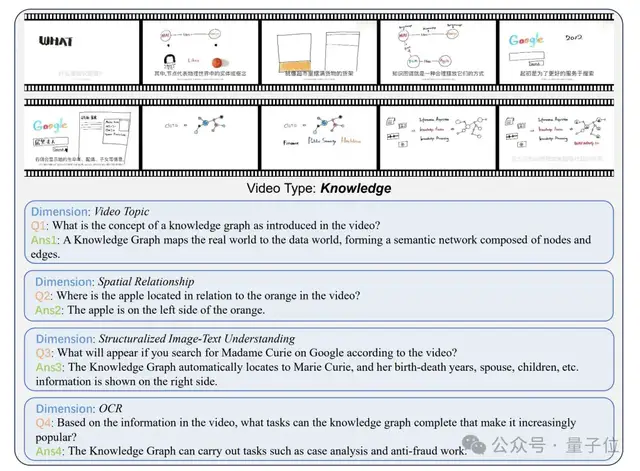

MMBench-Video is fully manually annotated, with a primary annotation pass followed by a secondary quality check. The videos are diverse and of high quality, and the Q&A pairs cover a comprehensive range of model capabilities. Answering accurately requires extracting information across the time dimension, which makes the benchmark a better test of a model’s temporal understanding.

Compared with other data sets, MMBench-Video has the following outstanding features:

Wide range of video durations and variable shot counts: The collected videos range from 30 seconds to 6 minutes, which avoids both the overly simple semantics of very short videos and the excessive resource cost of evaluating very long ones. The number of shots per video follows a long-tailed distribution. A single video can contain up to 210 shots, providing rich scene and contextual information.

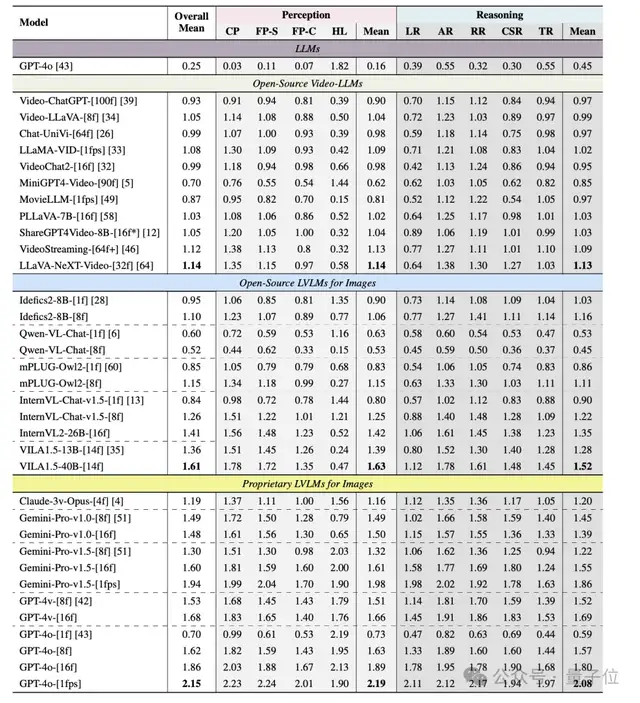

Comprehensive capability testing across perception and reasoning: A model’s video understanding ability consists mainly of two parts, perception and reasoning, each of which can be refined further. Inspired by MMBench and taking into account the specific abilities involved in video understanding, the researchers built a comprehensive capability taxonomy containing 26 fine-grained abilities. Each fine-grained ability is evaluated with dozens to hundreds of question-answer pairs, and the benchmark is not a collection of existing tasks.

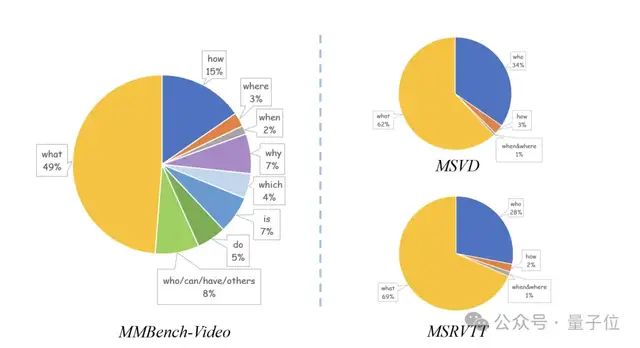
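To make the two-level structure concrete, the following is a minimal sketch of how per-question scores could be rolled up from fine-grained abilities into the two coarse categories. The capability names, the scores, and the choice of macro-averaging are all assumptions made for illustration; the article does not state how the benchmark aggregates its results.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (coarse category, fine-grained capability, score).
# Names and scores are invented for illustration only.
records = [
    ("Perception", "object recognition", 2.0),
    ("Perception", "object recognition", 1.0),
    ("Perception", "action recognition", 3.0),
    ("Reasoning", "causal reasoning", 1.0),
    ("Reasoning", "causal reasoning", 2.0),
    ("Reasoning", "temporal reasoning", 0.0),
]


def aggregate(records):
    """Average scores per fine-grained capability, then per coarse category.

    Macro-averaging (each capability counts equally inside its category)
    is an assumption of this sketch.
    """
    by_capability = defaultdict(list)
    for category, capability, score in records:
        by_capability[(category, capability)].append(score)
    fine = {key: mean(vals) for key, vals in by_capability.items()}

    by_category = defaultdict(list)
    for (category, _capability), avg in fine.items():
        by_category[category].append(avg)
    coarse = {category: mean(vals) for category, vals in by_category.items()}
    return fine, coarse


fine, coarse = aggregate(records)
for category, score in sorted(coarse.items()):
    print(f"{category}: {score:.2f}")
```

Macro-averaging keeps a capability with hundreds of questions from drowning out one with only dozens, which seems a natural fit for a taxonomy whose categories vary that much in size.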

Rich video types and linguistically diverse Q&A: The videos cover 16 major fields such as humanities, sports, science and education, food, and finance, with each field accounting for more than 5% of the videos. The question-answer pairs are also longer and semantically richer than those in traditional VideoQA datasets, and are not limited to simple question types such as ‘what’ and ‘when’.

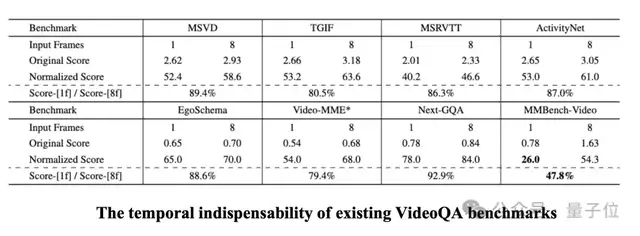

Strong temporal dependence and high annotation quality: The researchers found that in most VideoQA datasets, a single frame of the video provides enough information to answer accurately. This may be because consecutive frames change very little, because the videos contain few shots, or because the question-answer pairs are of low quality. The researchers describe such datasets as depending only weakly on temporal information. MMBench-Video, by comparison, depends far more heavily on temporal information, because annotators followed detailed rules and every question-answer pair was verified a second time. It can therefore better examine a model’s temporal understanding.

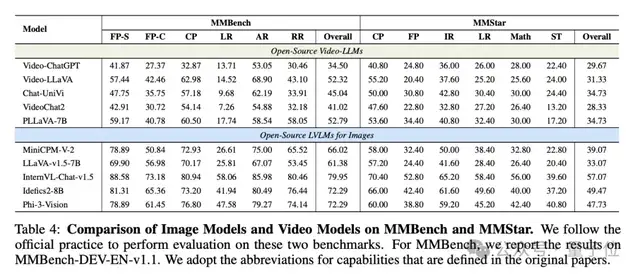
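One simple way to picture this property is to count how many questions a model can already answer from a single frame. The sketch below does that for two hypothetical datasets; the function name, the boolean inputs, and the numbers are assumptions for illustration and are not the measurement procedure used by the researchers.

```python
def fraction_answerable_from_one_frame(single_frame_correct):
    """Share of questions answered correctly when the model sees one frame.

    `single_frame_correct` is a list of booleans, one per question.
    A high value suggests the dataset depends only weakly on temporal
    information.
    """
    if not single_frame_correct:
        raise ValueError("need at least one question")
    return sum(single_frame_correct) / len(single_frame_correct)


# Hypothetical outcomes for two datasets (illustrative values only).
weakly_temporal = [True, True, True, False]
strongly_temporal = [True, False, False, False]

weak = fraction_answerable_from_one_frame(weakly_temporal)
strong = fraction_answerable_from_one_frame(strongly_temporal)
print(f"weakly temporal dataset:   {weak:.2f}")
print(f"strongly temporal dataset: {strong:.2f}")
```

The lower the fraction, the more the questions force a model to combine information from several points in the video.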

To evaluate video understanding performance across a broad set of models, the MMBench-Video team selected 11 representative video language models, 6 open-source multi-modal image-text models, and 5 closed-source models such as GPT-4o for comprehensive experimental analysis.

Among all models, GPT-4o stood out in video understanding, and Gemini-Pro-v1.5 also performed strongly.

Surprisingly, existing open-source multi-modal image-text models perform better overall on MMBench-Video than video language models fine-tuned on video question-answer pairs. The best image-text model, VILA1.5, exceeds the best video model, LLaVA-NeXT-Video, by nearly 40% in overall performance.

Further investigation suggests that image-text models do better at video understanding because they are stronger at extracting and processing fine-grained static visual information. Video language models, by contrast, fall short in both perception and reasoning even on static images, which leaves them unable to cope with more complex temporal reasoning and dynamic scenes.

This difference reveals that existing video models have significant deficiencies in spatial and temporal understanding. Their temporal reasoning urgently needs improvement, especially on long video content. In addition, the performance gains that image-text models show with multi-frame input suggest that they have the potential to extend further into video understanding, while video models need to learn from a wider range of tasks to close the gap.

Video length and number of shots are considered key factors affecting model performance.

Experimental results show that as video length increases, GPT-4o’s performance with multi-frame input declines, while open-source models such as InternVL-Chat-v1.5 and Video-LLaVA remain relatively stable. Compared with video length, the number of shots has a more significant impact on model performance.

When a video contains more than 50 shots, GPT-4o’s performance drops to 75% of its original score. This suggests that frequent shot changes make the video content harder for the model to follow, degrading its performance.
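The kind of comparison behind that figure can be sketched as follows. The data, the function name, and the reading of "original score" as the mean score on videos with at most 50 shots are assumptions for illustration; the article does not say how the baseline was defined.

```python
from statistics import mean


def relative_score_by_shots(results, threshold=50):
    """Mean score on many-shot videos as a fraction of the few-shot mean.

    `results` is a list of (shot_count, score) pairs. Treating videos
    with at most `threshold` shots as the baseline is an assumption of
    this sketch.
    """
    few = [score for shots, score in results if shots <= threshold]
    many = [score for shots, score in results if shots > threshold]
    if not few or not many:
        raise ValueError("need videos on both sides of the threshold")
    return mean(many) / mean(few)


# Hypothetical per-video results: (number of shots, score).
results = [(10, 2.0), (30, 2.0), (60, 1.5), (120, 1.5)]

ratio = relative_score_by_shots(results)
print(f"score on videos with more than 50 shots: {ratio:.0%} of baseline")
```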

In addition, MMBench-Video obtains each video’s subtitles through an interface, thereby introducing the audio modality in text form.

With subtitles added, the models’ video understanding performance improves significantly. When audio information is combined with visual signals, a model can answer complex questions more accurately. This result shows that subtitle information greatly enriches the context available to the model. In long-video tasks especially, the information density of the speech modality gives the model more clues and helps it generate more accurate answers. Note, however, that although speech information can improve performance, it may also increase the risk of hallucinated content.

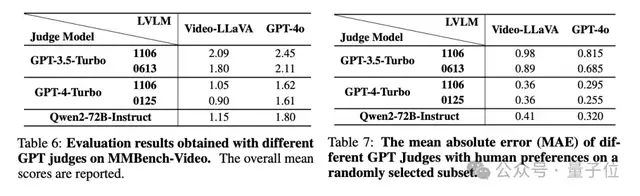

For the choice of judge model, experiments show that GPT-4 scores more fairly and stably. It is highly resistant to manipulation, does not favor its own answers, and aligns better with human judges.

In contrast, GPT-3.5 tends to give inflated scores, which distorts the final results. Open-source large language models such as Qwen2-72B-Instruct have also shown excellent scoring potential. Their strong alignment with human judges suggests that they could become efficient model evaluation tools.
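A minimal sketch of how a judge model’s alignment with human raters could be quantified is shown below. The 0 to 3 score scale, the example scores, and the two metrics (mean bias and mean absolute error) are assumptions chosen for illustration, not the evaluation protocol reported by the team.

```python
from statistics import mean


def judge_alignment(judge_scores, human_scores):
    """Compare a judge model's scores with human scores on the same answers.

    Returns the mean signed difference ("bias", positive when the judge
    scores higher than humans) and the mean absolute error ("mae").
    """
    if not judge_scores or len(judge_scores) != len(human_scores):
        raise ValueError("score lists must be non-empty and equally long")
    diffs = [j - h for j, h in zip(judge_scores, human_scores)]
    return {"bias": mean(diffs), "mae": mean([abs(d) for d in diffs])}


# Hypothetical scores on an assumed 0-3 scale for five answers.
human = [0, 1, 2, 3, 1]
lenient_judge = [1, 2, 3, 3, 2]   # tends to over-score
aligned_judge = [0, 1, 2, 3, 2]   # close to the human scores

lenient = judge_alignment(lenient_judge, human)
aligned = judge_alignment(aligned_judge, human)
print("lenient judge:", lenient)
print("aligned judge:", aligned)
```

A large positive bias corresponds to the inflated scoring described above, while a judge with both bias and error near zero tracks human raters closely.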

MMBench-Video currently supports one-click evaluation in VLMEvalKit.

VLMEvalKit is an open-source toolkit designed for evaluating large vision-language models. It supports one-click evaluation of these models on a variety of benchmarks without heavy data preparation, which simplifies the evaluation process. VLMEvalKit handles both image-text and video multi-modal models, and supports single image-text pairs, interleaved image-text input, and video-text input. It implements more than 70 benchmarks covering a variety of tasks, including but not limited to image description, visual question answering, and image caption generation. The supported models and benchmarks are continuously updated.

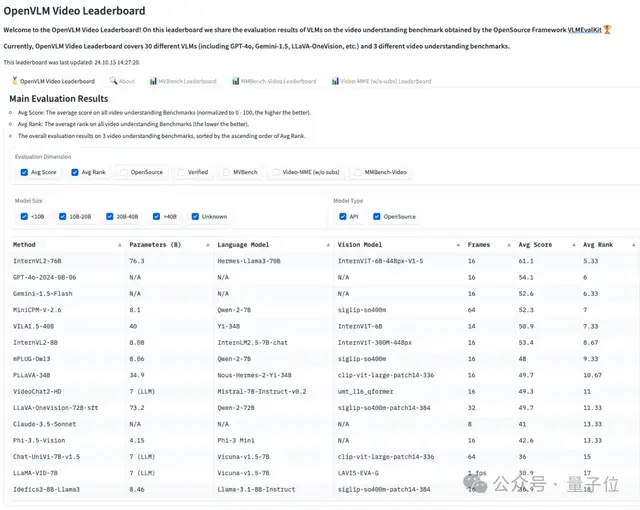

Because evaluation results for existing video multi-modal models are scattered and hard to reproduce, the team also established the OpenVLM Video Leaderboard, a comprehensive leaderboard of models’ video understanding ability. The OpenCompass VLMEvalKit team will continue to add the latest large multi-modal models and benchmarks, with the goal of building a mainstream, open and convenient open-source multi-modal evaluation system.

In summary, MMBench-Video is a new long-video, multi-shot benchmark designed for video understanding tasks, covering a wide range of video content and fine-grained capability assessment.

The benchmark contains more than 600 long videos collected from YouTube, covering 16 major categories such as news and sports, and is designed to evaluate the spatiotemporal reasoning capabilities of MLLMs. Unlike traditional video question-answering benchmarks, MMBench-Video addresses the shortcomings of existing benchmarks in temporal understanding and complex task handling by introducing long videos and high-quality, manually annotated question-answer pairs.

By evaluating model answers with GPT-4, this benchmark demonstrates higher evaluation accuracy and consistency, providing a powerful tool for model improvement in the field of video understanding.

The launch of MMBench-Video provides researchers and developers with a powerful evaluation tool to help the open source community deeply understand and optimize the capabilities of video language models.

Paper link:

https://arxiv.org/abs/2406.14515

Github link:

https://github.com/open-compass/VLMEvalKit

HomePage:

https://mmbench-video.github.io/

MMBench-Video LeaderBoard:
