Nvidia Uber Autonomous Driving Partnership

- Chipmaker Nvidia and ride-hailing giant Uber are teaming up to advance autonomous driving technology.

- The collaboration centers on Nvidia's Cosmos world foundation model, an artificial intelligence (AI) model.

- The effort relies on Nvidia's DGX Cloud infrastructure and focuses on three key goals: achieving higher precision in simulation, speeding up post-training iterations, and ensuring more reliable model...

Nvidia and Uber Partner to Accelerate Autonomous Driving with AI

What Happened: The Nvidia-Uber Collaboration

Chipmaker Nvidia and ride-hailing giant Uber are teaming up to advance autonomous driving technology. This partnership, announced on August 29, 2024, sent Uber’s stock up 3.5% on Thursday afternoon.

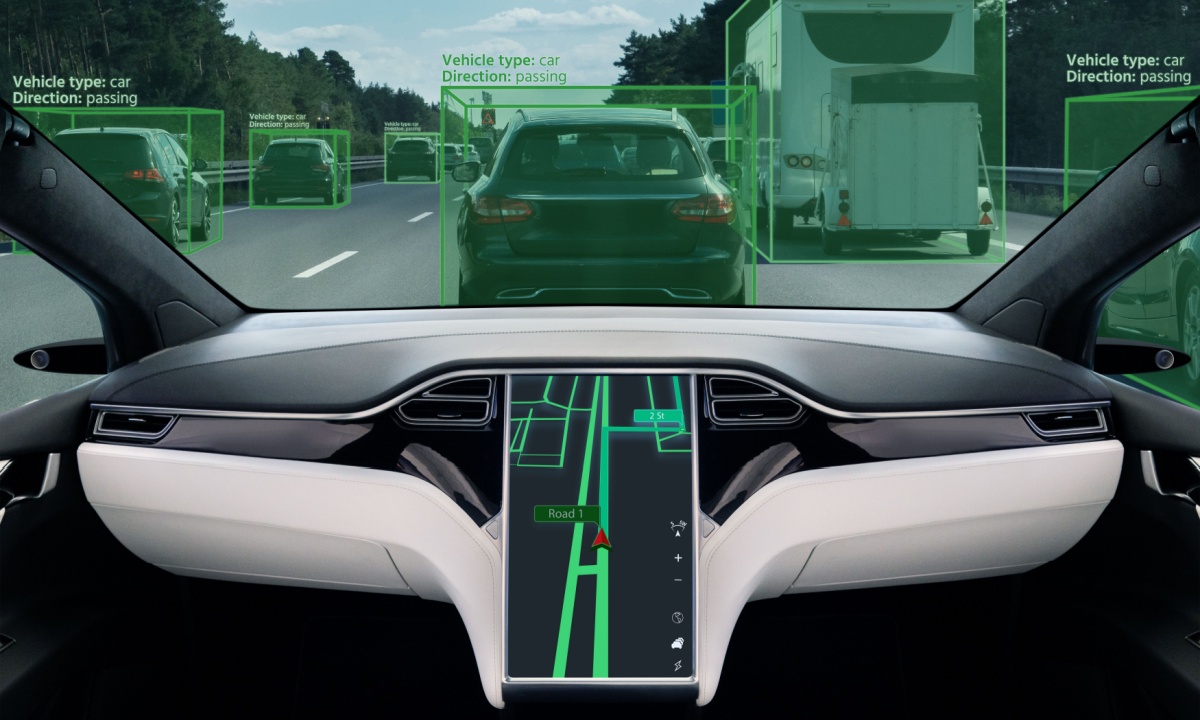

The collaboration centers on Nvidia’s Cosmos world foundation model, an artificial intelligence (AI) model. It will be trained on Uber’s extensive repository of real-world driving data, which covers scenarios such as airport pickups, complex intersections, and diverse weather conditions. By integrating this data, Nvidia aims to improve Cosmos’s ability to simulate and reason through unpredictable situations, shortening the testing period and improving performance in rare or extreme driving scenarios.

The effort relies on Nvidia’s DGX Cloud infrastructure and focuses on three key goals: achieving higher precision in simulation, speeding up post-training iterations, and ensuring more reliable model behavior in challenging conditions.

Understanding Nvidia’s Cosmos World and Foundation Models

Nvidia’s Cosmos is a world foundation model designed to simulate and understand complex environments. Foundation models, unlike conventional AI models trained on limited datasets, can leverage internet-scale knowledge. This allows vehicles to generalize from vast amounts of training data, improving their ability to handle unforeseen circumstances.

The key advantage of foundation models lies in their ability to learn representations of the world that transfer across different tasks. In the context of autonomous driving, this means a model trained on simulated data can adapt more effectively to real-world conditions, and vice versa. This reduces the need for extensive and costly real-world testing.

Nvidia detailed its roadmap for AI-enabled driving in an October 20, 2023, blog post, “How AI Is Unlocking Level 4 Autonomous Driving.” The post highlights how converging AI advancements are making high automation commercially viable.

The Role of Uber’s Real-World Driving Data

Uber’s contribution to this partnership is its massive dataset of real-world driving experiences, which is used to train and validate autonomous driving systems. The data includes:

- Diverse Road Conditions: Data from various cities and countries, representing different road layouts, traffic patterns, and infrastructure.

- Complex Scenarios: Recordings of challenging situations like navigating busy intersections, merging onto highways, and responding to unexpected pedestrian or cyclist behavior.

- Weather Variability: Data collected in a range of weather conditions, including rain, snow, fog, and bright sunlight.

- Edge Cases: Rare but critical events that require careful decision-making, such as emergency vehicle encounters or sudden obstacles.

Feeding this data into Cosmos allows Nvidia to refine the model’s ability to handle these complex scenarios, making it more robust and reliable.

Impact and Implications for Autonomous Driving

This partnership has significant implications for the future of autonomous driving. By combining Nvidia’s AI expertise with Uber’s real-world data, the companies aim to accelerate the development and deployment of Level 4 autonomous vehicles, which can handle all driving tasks under specific conditions.

Level 4 Autonomy: A Closer Look

Level 4 autonomy represents a significant step towards fully